Mirror of https://github.com/soxoj/maigret.git, synced 2026-05-09 08:04:32 +00:00

Compare commits

1 Commits

| Author | SHA1 | Date | |
|---|---|---|---|
| | 4791a6fc96 | | |

@@ -1,2 +0,0 @@

|

||||

#!/bin/sh

|

||||

python3 ./utils/update_site_data.py

|

||||

@@ -1,5 +1,3 @@

|

||||

# These are supported funding model platforms

|

||||

|

||||

patreon: soxoj

|

||||

github: soxoj

|

||||

buy_me_a_coffee: soxoj

|

||||

@@ -15,14 +15,10 @@ assignees: soxoj

|

||||

|

||||

## Description

|

||||

|

||||

Info about Maigret version you are running and environment (`--version`, operation system, ISP provider):

|

||||

Info about Maigret version you are running and environment (`--version`, operation system, ISP provuder):

|

||||

<INSERT VERSION INFO HERE>

|

||||

|

||||

How to reproduce this bug (commandline options / conditions):

|

||||

<INSERT EXAMPLE OF CLI COMMAND HERE>

|

||||

|

||||

<DESCRIPTION>

|

||||

|

||||

<PASTE SCREENSHOT>

|

||||

|

||||

<ATTACH LOG FILE>

|

||||

|

||||

@@ -27,7 +27,6 @@ jobs:

|

||||

with:

|

||||

push: true

|

||||

tags: ${{ secrets.DOCKER_HUB_USERNAME }}/maigret:latest

|

||||

platforms: linux/amd64,linux/arm64

|

||||

-

|

||||

name: Image digest

|

||||

run: echo ${{ steps.docker_build.outputs.digest }}

|

||||

|

||||

@@ -2,7 +2,9 @@ name: Package exe with PyInstaller - Windows

|

||||

|

||||

on:

|

||||

push:

|

||||

branches: [ main, dev ]

|

||||

branches: [ main ]

|

||||

pull_request:

|

||||

branches: [ main ]

|

||||

|

||||

jobs:

|

||||

build:

|

||||

@@ -10,13 +12,13 @@ jobs:

|

||||

runs-on: ubuntu-latest

|

||||

|

||||

steps:

|

||||

- uses: actions/checkout@v4

|

||||

- uses: actions/checkout@v2

|

||||

- name: PyInstaller Windows

|

||||

uses: JackMcKew/pyinstaller-action-windows@main

|

||||

with:

|

||||

path: pyinstaller

|

||||

|

||||

- uses: actions/upload-artifact@v4

|

||||

- uses: actions/upload-artifact@v2

|

||||

with:

|

||||

name: maigret_standalone_win32

|

||||

path: pyinstaller/dist/windows # or path/to/artifact

|

||||

|

||||

@@ -2,7 +2,6 @@ name: Linting and testing

|

||||

|

||||

on:

|

||||

push:

|

||||

branches: [ main ]

|

||||

pull_request:

|

||||

branches: [ main ]

|

||||

types: [opened, synchronize, reopened]

|

||||

@@ -13,7 +12,7 @@ jobs:

|

||||

runs-on: ubuntu-latest

|

||||

strategy:

|

||||

matrix:

|

||||

python-version: ["3.10", "3.11", "3.12"]

|

||||

python-version: [3.6.9, 3.7, 3.8, 3.9]

|

||||

|

||||

steps:

|

||||

- uses: actions/checkout@v2

|

||||

@@ -24,8 +23,8 @@ jobs:

|

||||

- name: Install dependencies

|

||||

run: |

|

||||

python -m pip install --upgrade pip

|

||||

python -m pip install poetry

|

||||

python -m poetry install --with dev

|

||||

python -m pip install -r test-requirements.txt

|

||||

if [ -f requirements.txt ]; then pip install -r requirements.txt; fi

|

||||

- name: Test with pytest

|

||||

run: |

|

||||

poetry run pytest --reruns 3 --reruns-delay 5

|

||||

pytest --reruns 3 --reruns-delay 5

|

||||

|

||||

@@ -2,7 +2,7 @@ name: Update sites rating and statistics

|

||||

|

||||

on:

|

||||

pull_request:

|

||||

branches: [ dev ]

|

||||

branches: [ main ]

|

||||

types: [opened, synchronize]

|

||||

|

||||

jobs:

|

||||

|

||||

@@ -1,6 +1,5 @@

|

||||

# Virtual Environment

|

||||

venv/

|

||||

.venv/

|

||||

|

||||

# Editor Configurations

|

||||

.vscode/

|

||||

@@ -16,10 +15,6 @@ src/

|

||||

.ipynb_checkpoints

|

||||

*.ipynb

|

||||

|

||||

# Logs and backups

|

||||

*.log

|

||||

*.bak

|

||||

|

||||

# Output files, except requirements.txt

|

||||

*.txt

|

||||

!requirements.txt

|

||||

@@ -39,7 +34,3 @@ htmlcov/

|

||||

|

||||

# Maigret files

|

||||

settings.json

|

||||

|

||||

# other

|

||||

*.egg-info

|

||||

build

|

||||

|

||||

@@ -1,16 +0,0 @@

|

||||

version: 2

|

||||

|

||||

build:

|

||||

os: ubuntu-22.04

|

||||

tools:

|

||||

python: "3.10"

|

||||

|

||||

sphinx:

|

||||

configuration: docs/source/conf.py

|

||||

|

||||

formats:

|

||||

- pdf

|

||||

|

||||

python:

|

||||

install:

|

||||

- requirements: docs/requirements.txt

|

||||

@@ -2,103 +2,6 @@

|

||||

|

||||

## [Unreleased]

|

||||

|

||||

## [0.4.4] - 2022-09-03

|

||||

* Fixed some false positives by @soxoj in https://github.com/soxoj/maigret/pull/433

|

||||

* Drop Python 3.6 support by @soxoj in https://github.com/soxoj/maigret/pull/434

|

||||

* Bump xhtml2pdf from 0.2.5 to 0.2.7 by @dependabot in https://github.com/soxoj/maigret/pull/409

|

||||

* Bump reportlab from 3.6.6 to 3.6.9 by @dependabot in https://github.com/soxoj/maigret/pull/403

|

||||

* Bump markupsafe from 2.0.1 to 2.1.1 by @dependabot in https://github.com/soxoj/maigret/pull/389

|

||||

* Bump pycountry from 22.1.10 to 22.3.5 by @dependabot in https://github.com/soxoj/maigret/pull/384

|

||||

* Bump pypdf2 from 1.26.0 to 1.27.4 by @dependabot in https://github.com/soxoj/maigret/pull/438

|

||||

* Update GH actions by @soxoj in https://github.com/soxoj/maigret/pull/439

|

||||

* Bump tqdm from 4.63.0 to 4.64.0 by @dependabot in https://github.com/soxoj/maigret/pull/440

|

||||

* Bump jinja2 from 3.0.3 to 3.1.1 by @dependabot in https://github.com/soxoj/maigret/pull/441

|

||||

* Bump soupsieve from 2.3.1 to 2.3.2 by @dependabot in https://github.com/soxoj/maigret/pull/436

|

||||

* Bump pypdf2 from 1.26.0 to 1.27.4 by @dependabot in https://github.com/soxoj/maigret/pull/442

|

||||

* Bump pyvis from 0.1.9 to 0.2.0 by @dependabot in https://github.com/soxoj/maigret/pull/443

|

||||

* Bump pypdf2 from 1.27.4 to 1.27.6 by @dependabot in https://github.com/soxoj/maigret/pull/448

|

||||

* Bump typing-extensions from 4.1.1 to 4.2.0 by @dependabot in https://github.com/soxoj/maigret/pull/447

|

||||

* Bump soupsieve from 2.3.2 to 2.3.2.post1 by @dependabot in https://github.com/soxoj/maigret/pull/444

|

||||

* Bump pypdf2 from 1.27.6 to 1.27.7 by @dependabot in https://github.com/soxoj/maigret/pull/449

|

||||

* Bump pypdf2 from 1.27.7 to 1.27.8 by @dependabot in https://github.com/soxoj/maigret/pull/450

|

||||

* XMind 8 report warning and some docs update by @soxoj in https://github.com/soxoj/maigret/pull/452

|

||||

* False positive fixes 24.04.22 by @soxoj in https://github.com/soxoj/maigret/pull/455

|

||||

* Bump pypdf2 from 1.27.8 to 1.27.9 by @dependabot in https://github.com/soxoj/maigret/pull/456

|

||||

* Bump pytest from 7.0.1 to 7.1.2 by @dependabot in https://github.com/soxoj/maigret/pull/457

|

||||

* Bump jinja2 from 3.1.1 to 3.1.2 by @dependabot in https://github.com/soxoj/maigret/pull/460

|

||||

* Ubisoft forums addition by @fen0s in https://github.com/soxoj/maigret/pull/461

|

||||

* Add BYOND, Figma, BeatStars by @fen0s in https://github.com/soxoj/maigret/pull/462

|

||||

* fix Figma username definition, add a bunch of sites by @fen0s in https://github.com/soxoj/maigret/pull/464

|

||||

* Bump pypdf2 from 1.27.9 to 1.27.10 by @dependabot in https://github.com/soxoj/maigret/pull/465

|

||||

* Bump pypdf2 from 1.27.10 to 1.27.12 by @dependabot in https://github.com/soxoj/maigret/pull/466

|

||||

* Sites fixes 05 05 22 by @soxoj in https://github.com/soxoj/maigret/pull/469

|

||||

* Bump pyvis from 0.2.0 to 0.2.1 by @dependabot in https://github.com/soxoj/maigret/pull/472

|

||||

* Social analyzer websites, also fixing presense strs by @fen0s in https://github.com/soxoj/maigret/pull/471

|

||||

* Updated logic of false positive risk estimating by @soxoj in https://github.com/soxoj/maigret/pull/475

|

||||

* Improved usability of external progressbar func by @soxoj in https://github.com/soxoj/maigret/pull/476

|

||||

* New sites added, some tags/rank update by @soxoj in https://github.com/soxoj/maigret/pull/477

|

||||

* Added new sites by @soxoj in https://github.com/soxoj/maigret/pull/480

|

||||

* Added new forums, updated ranks, some utils improvements by @soxoj in https://github.com/soxoj/maigret/pull/481

|

||||

* Disabled sites with false positives results by @soxoj in https://github.com/soxoj/maigret/pull/482

|

||||

* Bump certifi from 2021.10.8 to 2022.5.18.1 by @dependabot in https://github.com/soxoj/maigret/pull/488

|

||||

* Bump psutil from 5.9.0 to 5.9.1 by @dependabot in https://github.com/soxoj/maigret/pull/490

|

||||

* Bump pypdf2 from 1.27.12 to 1.28.1 by @dependabot in https://github.com/soxoj/maigret/pull/491

|

||||

* Bump pypdf2 from 1.28.1 to 1.28.2 by @dependabot in https://github.com/soxoj/maigret/pull/493

|

||||

* added and fixed some websites in data.json by @kustermariocoding in https://github.com/soxoj/maigret/pull/494

|

||||

* Bump pypdf2 from 1.28.2 to 2.0.0 by @dependabot in https://github.com/soxoj/maigret/pull/504

|

||||

* Bump pefile from 2021.9.3 to 2022.5.30 by @dependabot in https://github.com/soxoj/maigret/pull/499

|

||||

* Updated sites list, added disabled Anilist by @soxoj in https://github.com/soxoj/maigret/pull/502

|

||||

* Bump lxml from 4.8.0 to 4.9.0 by @dependabot in https://github.com/soxoj/maigret/pull/503

|

||||

* Compatibility with Python 10 by @soxoj in https://github.com/soxoj/maigret/pull/509

|

||||

* feat: add .log & .bak files to gitignore in https://github.com/soxoj/maigret/pull/511

|

||||

* fix some sites and delete abandoned by @fen0s in https://github.com/soxoj/maigret/pull/526

|

||||

* Fixesjulyfirst by @fen0s in https://github.com/soxoj/maigret/pull/533

|

||||

* yazbel, aboutcar, zhihu by @fen0s in https://github.com/soxoj/maigret/pull/531

|

||||

* Fixes july third by @fen0s in https://github.com/soxoj/maigret/pull/535

|

||||

* Update data.json by @fen0s in https://github.com/soxoj/maigret/pull/539

|

||||

* Update data.json by @fen0s in https://github.com/soxoj/maigret/pull/540

|

||||

* Bump reportlab from 3.6.9 to 3.6.11 by @dependabot in https://github.com/soxoj/maigret/pull/543

|

||||

* Bump requests from 2.27.1 to 2.28.1 by @dependabot in https://github.com/soxoj/maigret/pull/530

|

||||

* Bump pypdf2 from 2.0.0 to 2.5.0 by @dependabot in https://github.com/soxoj/maigret/pull/542

|

||||

* Bump xhtml2pdf from 0.2.7 to 0.2.8 by @dependabot in https://github.com/soxoj/maigret/pull/522

|

||||

* Bump lxml from 4.9.0 to 4.9.1 by @dependabot in https://github.com/soxoj/maigret/pull/538

|

||||

* disable yandex music + set utf8 encoding by @fen0s in https://github.com/soxoj/maigret/pull/562

|

||||

* fix false positives by @fen0s in https://github.com/soxoj/maigret/pull/577

|

||||

* disable Instagram, fix two false positives by @fen0s in https://github.com/soxoj/maigret/pull/578

|

||||

* Bump certifi from 2022.5.18.1 to 2022.6.15 by @dependabot in https://github.com/soxoj/maigret/pull/551

|

||||

* August15 by @fen0s in https://github.com/soxoj/maigret/pull/591

|

||||

* Bump pytest-httpserver from 1.0.4 to 1.0.5 by @dependabot in https://github.com/soxoj/maigret/pull/583

|

||||

* Bump typing-extensions from 4.2.0 to 4.3.0 by @dependabot in https://github.com/soxoj/maigret/pull/549

|

||||

* Bump colorama from 0.4.4 to 0.4.5 by @dependabot in https://github.com/soxoj/maigret/pull/548

|

||||

* Bump chardet from 4.0.0 to 5.0.0 by @dependabot in https://github.com/soxoj/maigret/pull/550

|

||||

* Bump cloudscraper from 1.2.60 to 1.2.63 by @dependabot in https://github.com/soxoj/maigret/pull/600

|

||||

* Bump flake8 from 4.0.1 to 5.0.4 by @dependabot in https://github.com/soxoj/maigret/pull/598

|

||||

* Bump attrs from 21.4.0 to 22.1.0 by @dependabot in https://github.com/soxoj/maigret/pull/597

|

||||

* Bump pytest-asyncio from 0.18.2 to 0.19.0 by @dependabot in https://github.com/soxoj/maigret/pull/601

|

||||

* Bump pypdf2 from 2.5.0 to 2.10.4 by @dependabot in https://github.com/soxoj/maigret/pull/606

|

||||

* Bump pytest from 7.1.2 to 7.1.3 by @dependabot in https://github.com/soxoj/maigret/pull/613

|

||||

* Update sites.md -Gitmemory.com suppression by @C3n7ral051nt4g3ncy in https://github.com/soxoj/maigret/pull/610

|

||||

* Bump cloudscraper from 1.2.63 to 1.2.64 by @dependabot in https://github.com/soxoj/maigret/pull/614

|

||||

* Bump pycountry from 22.1.10 to 22.3.5 by @dependabot in https://github.com/soxoj/maigret/pull/607

|

||||

* add ProtonMail, disable 3 broken sites by @fen0s in https://github.com/soxoj/maigret/pull/619

|

||||

* Bump tqdm from 4.64.0 to 4.64.1 by @dependabot in https://github.com/soxoj/maigret/pull/618

|

||||

|

||||

**Full Changelog**: https://github.com/soxoj/maigret/compare/v0.4.3...v0.4.4

|

||||

|

||||

## [0.4.3] - 2022-04-13

|

||||

* Added Sites to data.json by @kustermariocoding in https://github.com/soxoj/maigret/pull/386

|

||||

* added new Websites to data.json by @kustermariocoding in https://github.com/soxoj/maigret/pull/390

|

||||

* Skipped broken tests by @soxoj in https://github.com/soxoj/maigret/pull/397

|

||||

* Added new Websites to data.json by @kustermariocoding in https://github.com/soxoj/maigret/pull/401

|

||||

* Added new Websites to data.json by @kustermariocoding in https://github.com/soxoj/maigret/pull/404

|

||||

* Updated statistics by @soxoj in https://github.com/soxoj/maigret/pull/406

|

||||

* Added new Websites to data.json by @kustermariocoding in https://github.com/soxoj/maigret/pull/413

|

||||

* Disabled houzz.com, updated sites statistics by @soxoj in https://github.com/soxoj/maigret/pull/422

|

||||

* Fixed last false positives by @soxoj in https://github.com/soxoj/maigret/pull/424

|

||||

* Fixed actual false positives by @soxoj in https://github.com/soxoj/maigret/pull/431

|

||||

|

||||

**Full Changelog**: https://github.com/soxoj/maigret/compare/v0.4.2...v0.4.3

|

||||

|

||||

## [0.4.2] - 2022-03-07

|

||||

* [ImgBot] Optimize images by @imgbot in https://github.com/soxoj/maigret/pull/319

|

||||

* Bump pytest-asyncio from 0.17.0 to 0.17.1 by @dependabot in https://github.com/soxoj/maigret/pull/321

|

||||

|

||||

+1

-24

@@ -2,10 +2,6 @@

|

||||

|

||||

Hey! I'm really glad you're reading this. Maigret contains a lot of sites, and it is very hard to keep all the sites operational. That's why any fix is important.

|

||||

|

||||

## Code of Conduct

|

||||

|

||||

Please read and follow the [Code of Conduct](CODE_OF_CONDUCT.md) to foster a welcoming and inclusive community.

|

||||

|

||||

## How to add a new site

|

||||

|

||||

#### Beginner level

|

||||

@@ -31,23 +27,4 @@ Always write a clear log message for your commits. One-line messages are fine fo

|

||||

|

||||

## Coding conventions

|

||||

|

||||

### General Guidelines

|

||||

|

||||

- Try to follow [PEP 8](https://www.python.org/dev/peps/pep-0008/) for Python code style.

|

||||

- Ensure your code passes all tests before submitting a pull request.

|

||||

|

||||

### Code Style

|

||||

|

||||

- **Indentation**: Use 4 spaces per indentation level.

|

||||

- **Imports**:

|

||||

- Standard library imports should be placed at the top.

|

||||

- Third-party imports should follow.

|

||||

- Group imports logically.

|

||||

|

||||

### Naming Conventions

|

||||

|

||||

- **Variables and Functions**: Use `snake_case`.

|

||||

- **Classes**: Use `CamelCase`.

|

||||

- **Constants**: Use `UPPER_CASE`.

|

||||

|

||||

Start reading the code and you'll get the hang of it. ;)

|

||||

Start reading the code and you'll get the hang of it. ;)

|

||||

|

||||

+10

-10

@@ -1,16 +1,16 @@

|

||||

FROM python:3.10-slim

|

||||

LABEL maintainer="Soxoj <soxoj@protonmail.com>"

|

||||

FROM python:3.9-slim

|

||||

MAINTAINER Soxoj <soxoj@protonmail.com>

|

||||

WORKDIR /app

|

||||

RUN pip install --no-cache-dir --upgrade pip

|

||||

RUN apt-get update && \

|

||||

apt-get install --no-install-recommends -y \

|

||||

RUN pip install --upgrade pip

|

||||

RUN apt update && \

|

||||

apt install -y \

|

||||

gcc \

|

||||

musl-dev \

|

||||

libxml2 \

|

||||

libxml2-dev \

|

||||

libxslt-dev \

|

||||

&& \

|

||||

rm -rf /var/lib/apt/lists/* /tmp/*

|

||||

COPY . .

|

||||

RUN YARL_NO_EXTENSIONS=1 python3 -m pip install --no-cache-dir .

|

||||

libxslt-dev

|

||||

RUN apt clean \

|

||||

&& rm -rf /var/lib/apt/lists/* /tmp/*

|

||||

ADD . .

|

||||

RUN YARL_NO_EXTENSIONS=1 python3 -m pip install .

|

||||

ENTRYPOINT ["maigret"]

|

||||

|

||||

-128

@@ -1,128 +0,0 @@

|

||||

@echo off

|

||||

|

||||

REM check if running as admin

|

||||

|

||||

goto check_Permissions

|

||||

|

||||

:check_Permissions

|

||||

echo Administrative permissions required. Detecting permissions...

|

||||

|

||||

net session >nul 2>&1

|

||||

if %errorLevel% == 0 (

|

||||

goto 1

|

||||

) else (

|

||||

cls

|

||||

echo Failure: You MUST run this as administator, otherwise commands will fail.

|

||||

)

|

||||

|

||||

pause >nul

|

||||

|

||||

|

||||

|

||||

REM Step 2: Check if Python and pip3 are installed

|

||||

python --version >nul 2>&1

|

||||

if %errorlevel% neq 0 (

|

||||

echo Python is not installed. Please install Python 3.8 or higher.

|

||||

pause

|

||||

exit /b

|

||||

)

|

||||

|

||||

pip3 --version >nul 2>&1

|

||||

if %errorlevel% neq 0 (

|

||||

echo pip3 is not installed. Please install pip3.

|

||||

pause

|

||||

exit /b

|

||||

)

|

||||

|

||||

REM Step 3: Check Python version

|

||||

python -c "import sys; exit(0) if sys.version_info >= (3,8) else exit(1)"

|

||||

if %errorlevel% neq 0 (

|

||||

echo Python version 3.8 or higher is required.

|

||||

pause

|

||||

exit /b

|

||||

)

|

||||

|

||||

|

||||

:1

|

||||

cls

|

||||

:::===============================================================

|

||||

::: ______ __ __ _ _

|

||||

::: | ____| | \/ | (_) | |

|

||||

::: | |__ __ _ ___ _ _ | \ / | __ _ _ __ _ _ __ ___| |_

|

||||

::: | __| / _` / __| | | | | |\/| |/ _` | |/ _` | '__/ _ \ __|

|

||||

::: | |___| (_| \__ \ |_| | | | | | (_| | | (_| | | | __/ |_

|

||||

::: |______\__,_|___/\__, | |_| |_|\__,_|_|\__, |_| \___|\__|

|

||||

::: __/ | __/ |

|

||||

::: |___/ |___/

|

||||

:::

|

||||

:::===============================================================

|

||||

echo.

|

||||

for /f "delims=: tokens=*" %%A in ('findstr /b ::: "%~f0"') do @echo(%%A

|

||||

echo.

|

||||

echo ----------------------------------------------------------------

|

||||

echo Python 3.8 or higher and pip3 required.

|

||||

echo ----------------------------------------------------------------

|

||||

echo Press [I] to begin installation.

|

||||

echo Press [R] If already installed.

|

||||

echo ----------------------------------------------------------------

|

||||

choice /c IR

|

||||

if %errorlevel%==1 goto install1

|

||||

if %errorlevel%==2 goto after

|

||||

|

||||

:install1

|

||||

cls

|

||||

echo ========================================================

|

||||

echo Maigret Installation Script

|

||||

echo ========================================================

|

||||

echo.

|

||||

echo --------------------------------------------------------

|

||||

echo If your pip installation is outdated, it could cause

|

||||

echo cryptography to fail on installation.

|

||||

echo --------------------------------------------------------

|

||||

echo check for and install pip updates now?

|

||||

echo --------------------------------------------------------

|

||||

choice /c YN

|

||||

if %errorlevel%==1 goto install2

|

||||

if %errorlevel%==2 goto install3

|

||||

|

||||

:install2

|

||||

cls

|

||||

python -m pip install --upgrade pip

|

||||

goto:install3

|

||||

|

||||

:install3

|

||||

cls

|

||||

echo ========================================================

|

||||

echo Maigret Installation Script

|

||||

echo ========================================================

|

||||

echo.

|

||||

echo --------------------------------------------------------

|

||||

echo Install requirements and maigret?

|

||||

echo --------------------------------------------------------

|

||||

choice /c YN

|

||||

if %errorlevel%==1 goto install4

|

||||

if %errorlevel%==2 goto 1

|

||||

|

||||

:install4

|

||||

cls

|

||||

pip install .

|

||||

pip install maigret

|

||||

goto:after

|

||||

|

||||

:after

|

||||

cls

|

||||

echo ========================================================

|

||||

echo Maigret Background Search

|

||||

echo ========================================================

|

||||

echo.

|

||||

echo --------------------------------------------------------

|

||||

echo Please Enter Username / Email

|

||||

echo --------------------------------------------------------

|

||||

set /p input=

|

||||

maigret %input%

|

||||

echo.

|

||||

echo.

|

||||

echo.

|

||||

echo.

|

||||

pause

|

||||

goto:after

|

||||

@@ -10,16 +10,16 @@ rerun-tests:

|

||||

|

||||

lint:

|

||||

@echo 'syntax errors or undefined names'

|

||||

flake8 --count --select=E9,F63,F7,F82 --show-source --statistics ${LINT_FILES}

|

||||

flake8 --count --select=E9,F63,F7,F82 --show-source --statistics ${LINT_FILES} maigret.py

|

||||

|

||||

@echo 'warning'

|

||||

flake8 --count --exit-zero --max-complexity=10 --max-line-length=127 --statistics --ignore=E731,W503,E501 ${LINT_FILES}

|

||||

flake8 --count --exit-zero --max-complexity=10 --max-line-length=127 --statistics --ignore=E731,W503,E501 ${LINT_FILES} maigret.py

|

||||

|

||||

@echo 'mypy'

|

||||

mypy --check-untyped-defs ${LINT_FILES}

|

||||

mypy ${LINT_FILES}

|

||||

|

||||

speed:

|

||||

time python3 -m maigret --version

|

||||

time python3 ./maigret.py --version

|

||||

python3 -c "import timeit; t = timeit.Timer('import maigret'); print(t.timeit(number = 1000000))"

|

||||

python3 -X importtime -c "import maigret" 2> maigret-import.log

|

||||

python3 -m tuna maigret-import.log

|

||||

|

||||

@@ -3,35 +3,27 @@

|

||||

<p align="center">

|

||||

<p align="center">

|

||||

<a href="https://pypi.org/project/maigret/">

|

||||

<img alt="PyPI version badge for Maigret" src="https://img.shields.io/pypi/v/maigret?style=flat-square" />

|

||||

<img alt="PyPI" src="https://img.shields.io/pypi/v/maigret?style=flat-square">

|

||||

</a>

|

||||

<a href="https://pypi.org/project/maigret/">

|

||||

<img alt="PyPI download count for Maigret" src="https://img.shields.io/pypi/dw/maigret?style=flat-square" />

|

||||

<a href="https://pypi.org/project/maigret/">

|

||||

<img alt="PyPI - Downloads" src="https://img.shields.io/pypi/dw/maigret?style=flat-square">

|

||||

</a>

|

||||

<a href="https://github.com/soxoj/maigret">

|

||||

<img alt="Minimum Python version required: 3.10+" src="https://img.shields.io/badge/Python-3.10%2B-brightgreen?style=flat-square" />

|

||||

</a>

|

||||

<a href="https://github.com/soxoj/maigret/blob/main/LICENSE">

|

||||

<img alt="License badge for Maigret" src="https://img.shields.io/github/license/soxoj/maigret?style=flat-square" />

|

||||

</a>

|

||||

<a href="https://github.com/soxoj/maigret">

|

||||

<img alt="View count for Maigret project" src="https://komarev.com/ghpvc/?username=maigret&color=brightgreen&label=views&style=flat-square" />

|

||||

<a href="https://pypi.org/project/maigret/">

|

||||

<img alt="Views" src="https://komarev.com/ghpvc/?username=maigret&color=brightgreen&label=views&style=flat-square">

|

||||

</a>

|

||||

</p>

|

||||

<p align="center">

|

||||

<img src="https://raw.githubusercontent.com/soxoj/maigret/main/static/maigret.png" height="300"/>

|

||||

<img src="https://raw.githubusercontent.com/soxoj/maigret/main/static/maigret.png" height="200"/>

|

||||

</p>

|

||||

</p>

|

||||

|

||||

<i>The Commissioner Jules Maigret is a fictional French police detective, created by Georges Simenon. His investigation method is based on understanding the personality of different people and their interactions.</i>

|

||||

|

||||

<b>👉👉👉 [Online Telegram bot](https://t.me/osint_maigret_bot)</b>

|

||||

|

||||

## About

|

||||

|

||||

**Maigret** collects a dossier on a person **by username only**, checking for accounts on a huge number of sites and gathering all the available information from web pages. No API keys required. Maigret is an easy-to-use and powerful fork of [Sherlock](https://github.com/sherlock-project/sherlock).

|

||||

**Maigret** collect a dossier on a person **by username only**, checking for accounts on a huge number of sites and gathering all the available information from web pages. No API keys required. Maigret is an easy-to-use and powerful fork of [Sherlock](https://github.com/sherlock-project/sherlock).

|

||||

|

||||

Currently supported more than 3000 sites ([full list](https://github.com/soxoj/maigret/blob/main/sites.md)), search is launched against 500 popular sites in descending order of popularity by default. Also supported checking of Tor sites, I2P sites, and domains (via DNS resolving).

|

||||

Currently supported more than 2500 sites ([full list](https://github.com/soxoj/maigret/blob/main/sites.md)), search is launched against 500 popular sites in descending order of popularity by default. Also supported checking of Tor sites, I2P sites, and domains (via DNS resolving).

|

||||

|

||||

## Main features

|

||||

|

||||

@@ -45,28 +37,30 @@ See full description of Maigret features [in the documentation](https://maigret.

|

||||

|

||||

## Installation

|

||||

|

||||

‼️ Maigret is available online via [official Telegram bot](https://t.me/osint_maigret_bot).

|

||||

|

||||

Maigret can be installed using pip, Docker, or simply can be launched from the cloned repo.

|

||||

|

||||

Standalone EXE-binaries for Windows are located in [Releases section](https://github.com/soxoj/maigret/releases) of GitHub repository.

|

||||

|

||||

Also, you can run Maigret using cloud shells and Jupyter notebooks (see buttons below).

|

||||

Also you can run Maigret using cloud shells and Jupyter notebooks (see buttons below).

|

||||

|

||||

[](https://console.cloud.google.com/cloudshell/open?git_repo=https://github.com/soxoj/maigret&tutorial=README.md)

|

||||

<a href="https://repl.it/github/soxoj/maigret"><img src="https://replit.com/badge/github/soxoj/maigret" alt="Run on Replit" height="50"></a>

|

||||

<a href="https://repl.it/github/soxoj/maigret"><img src="https://user-images.githubusercontent.com/27065646/92304596-bf719b00-ef7f-11ea-987f-2c1f3c323088.png" alt="Run on Repl.it" height="50"></a>

|

||||

|

||||

<a href="https://colab.research.google.com/gist/soxoj/879b51bc3b2f8b695abb054090645000/maigret-collab.ipynb"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab" height="45"></a>

|

||||

<a href="https://mybinder.org/v2/gist/soxoj/9d65c2f4d3bec5dd25949197ea73cf3a/HEAD"><img src="https://mybinder.org/badge_logo.svg" alt="Open In Binder" height="45"></a>

|

||||

|

||||

### Package installing

|

||||

|

||||

**NOTE**: Python 3.10 or higher and pip is required, **Python 3.11 is recommended.**

|

||||

**NOTE**: Python 3.6 or higher and pip is required, **Python 3.8 is recommended.**

|

||||

|

||||

```bash

|

||||

# install from pypi

|

||||

pip3 install maigret

|

||||

|

||||

# or clone and install manually

|

||||

git clone https://github.com/soxoj/maigret && cd maigret

|

||||

pip3 install .

|

||||

|

||||

# usage

|

||||

maigret username

|

||||

```

|

||||

@@ -74,14 +68,11 @@ maigret username

|

||||

### Cloning a repository

|

||||

|

||||

```bash

|

||||

# or clone and install manually

|

||||

git clone https://github.com/soxoj/maigret && cd maigret

|

||||

|

||||

# build and install

|

||||

pip3 install .

|

||||

pip3 install -r requirements.txt

|

||||

|

||||

# usage

|

||||

maigret username

|

||||

./maigret.py username

|

||||

```

|

||||

|

||||

### Docker

|

||||

@@ -91,7 +82,7 @@ maigret username

|

||||

docker pull soxoj/maigret

|

||||

|

||||

# usage

|

||||

docker run -v /mydir:/app/reports soxoj/maigret:latest username --html

|

||||

docker run soxoj/maigret:latest username

|

||||

|

||||

# manual build

|

||||

docker build -t maigret .

|

||||

@@ -100,62 +91,32 @@ docker build -t maigret .

|

||||

## Usage examples

|

||||

|

||||

```bash

|

||||

# make HTML, PDF, and Xmind8 reports

|

||||

maigret user --html

|

||||

maigret user --pdf

|

||||

maigret user --xmind #Output not compatible with xmind 2022+

|

||||

# make HTML and PDF reports

|

||||

maigret user --html --pdf

|

||||

|

||||

# search on sites marked with tags photo & dating

|

||||

maigret user --tags photo,dating

|

||||

|

||||

# search on sites marked with tag us

|

||||

maigret user --tags us

|

||||

|

||||

# search for three usernames on all available sites

|

||||

maigret user1 user2 user3 -a

|

||||

```

|

||||

|

||||

Use `maigret --help` to get full options description. Also options [are documented](https://maigret.readthedocs.io/en/latest/command-line-options.html).
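The usage examples above can also be assembled programmatically. The following is a minimal sketch, not part of Maigret itself: `build_maigret_command` is a hypothetical helper name, and it only covers the flags shown in the examples (`--html`, `--pdf`, `--tags`, `-a`). It assumes the `maigret` executable is on `PATH` when the resulting command is actually run.

```python
import shlex
from typing import List, Optional, Sequence


def build_maigret_command(
    usernames: Sequence[str],
    html: bool = False,
    pdf: bool = False,
    tags: Optional[Sequence[str]] = None,
    all_sites: bool = False,
) -> List[str]:
    """Build an argv list for the maigret CLI (hypothetical helper).

    Only flags that appear in the README usage examples are supported.
    """
    if not usernames:
        raise ValueError("at least one username is required")

    argv = ["maigret", *usernames]
    if html:
        argv.append("--html")  # HTML report
    if pdf:
        argv.append("--pdf")  # PDF report
    if tags:
        # Tags are passed as a single comma-separated value, e.g. photo,dating
        argv += ["--tags", ",".join(tags)]
    if all_sites:
        argv.append("-a")  # search on all available sites
    return argv


if __name__ == "__main__":
    # Print the command instead of running it, so the sketch works
    # even where maigret is not installed.
    print(shlex.join(build_maigret_command(["user"], tags=["photo", "dating"])))
```

Once Maigret is installed, the returned list could be handed to `subprocess.run(argv, check=True)`. Building a list rather than a shell string avoids quoting problems with unusual usernames.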

|

||||

|

||||

## Contributing

|

||||

|

||||

Maigret has open-source code, so you may contribute your own sites by adding them to `data.json` file, or bring changes to it's code!

|

||||

|

||||

For more information about development and contribution, please read the [development documentation](https://maigret.readthedocs.io/en/latest/development.html).

|

||||

|

||||

## Demo with page parsing and recursive username search

|

||||

|

||||

### Video (asciinema)

|

||||

|

||||

<a href="https://asciinema.org/a/Ao0y7N0TTxpS0pisoprQJdylZ">

|

||||

<img src="https://asciinema.org/a/Ao0y7N0TTxpS0pisoprQJdylZ.svg" alt="asciicast" width="600">

|

||||

</a>

|

||||

|

||||

### Reports

|

||||

|

||||

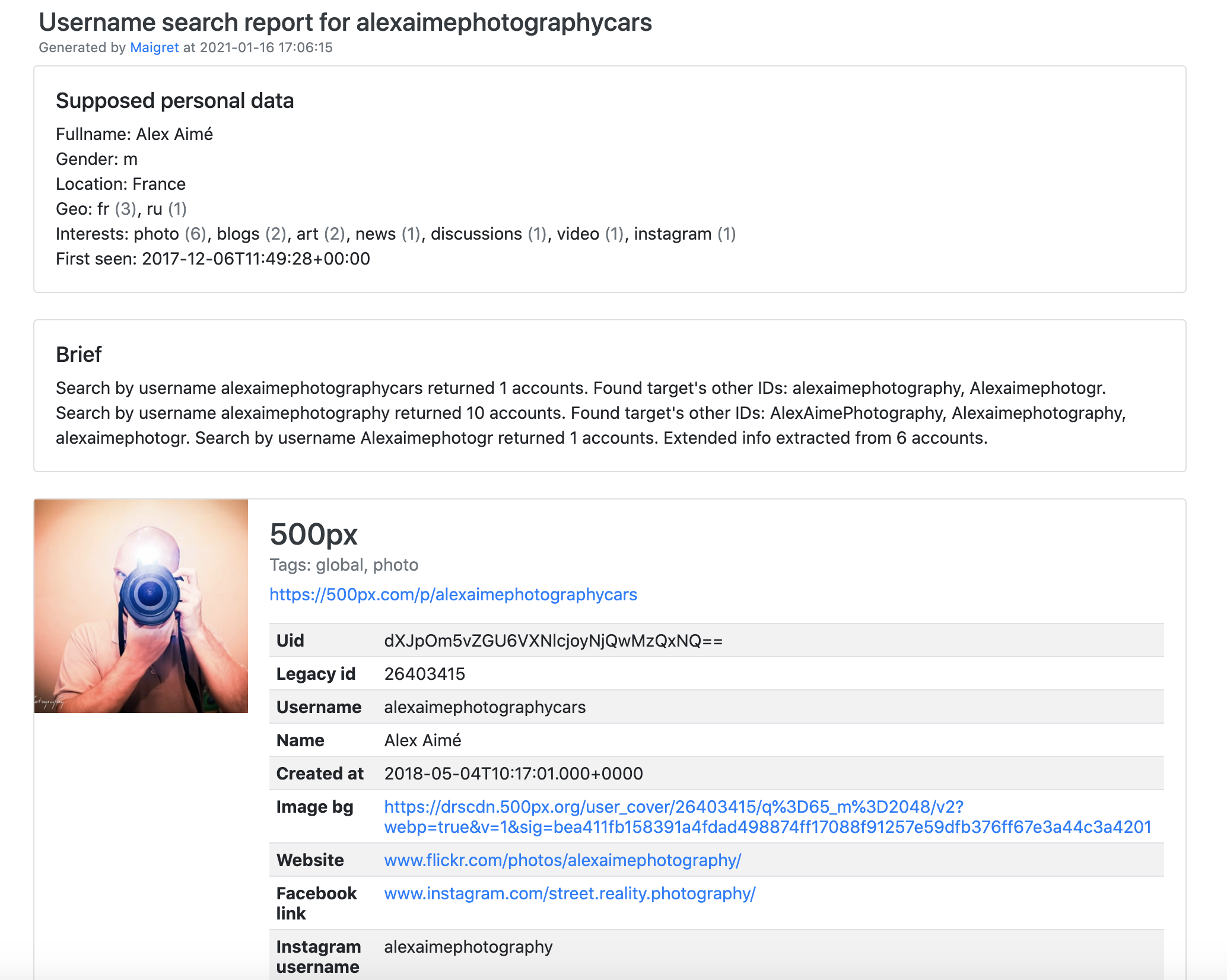

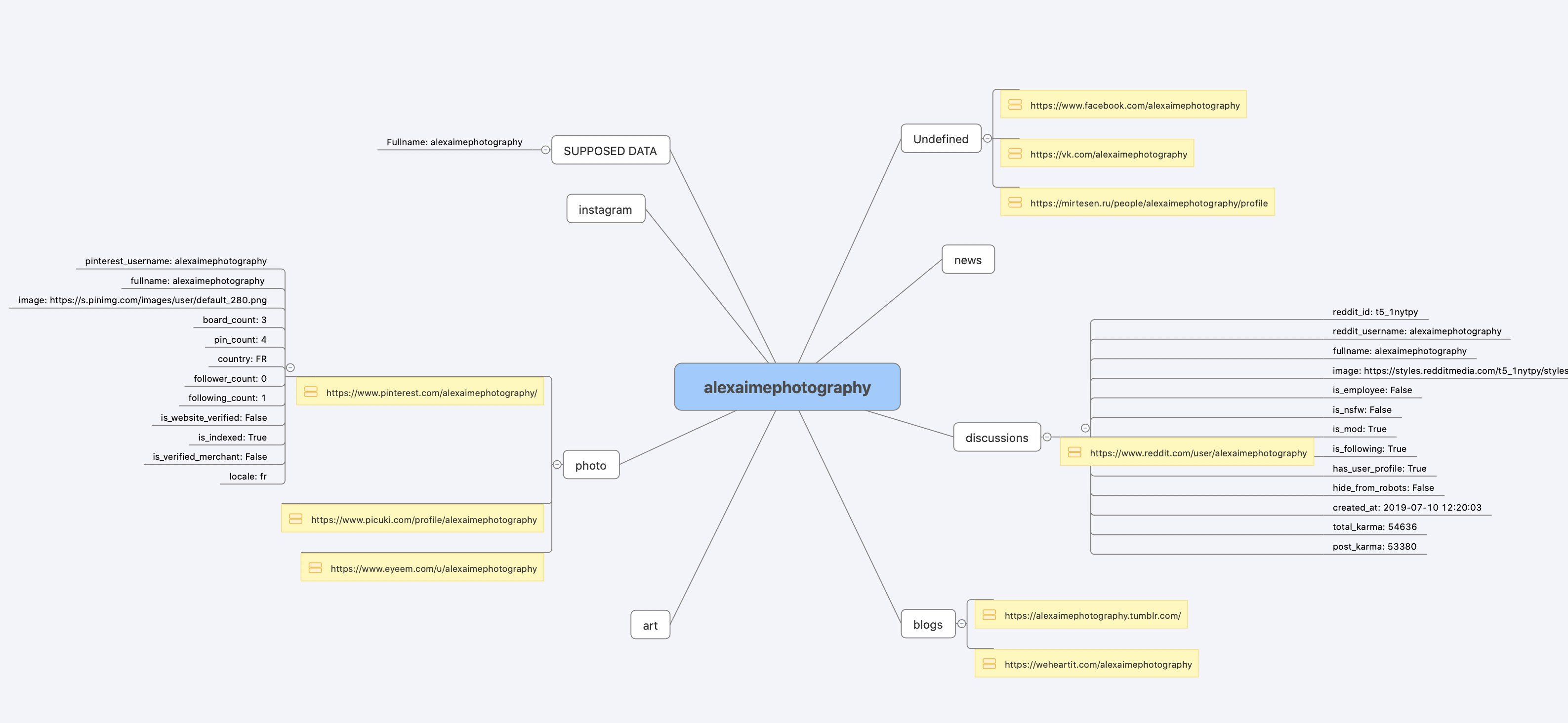

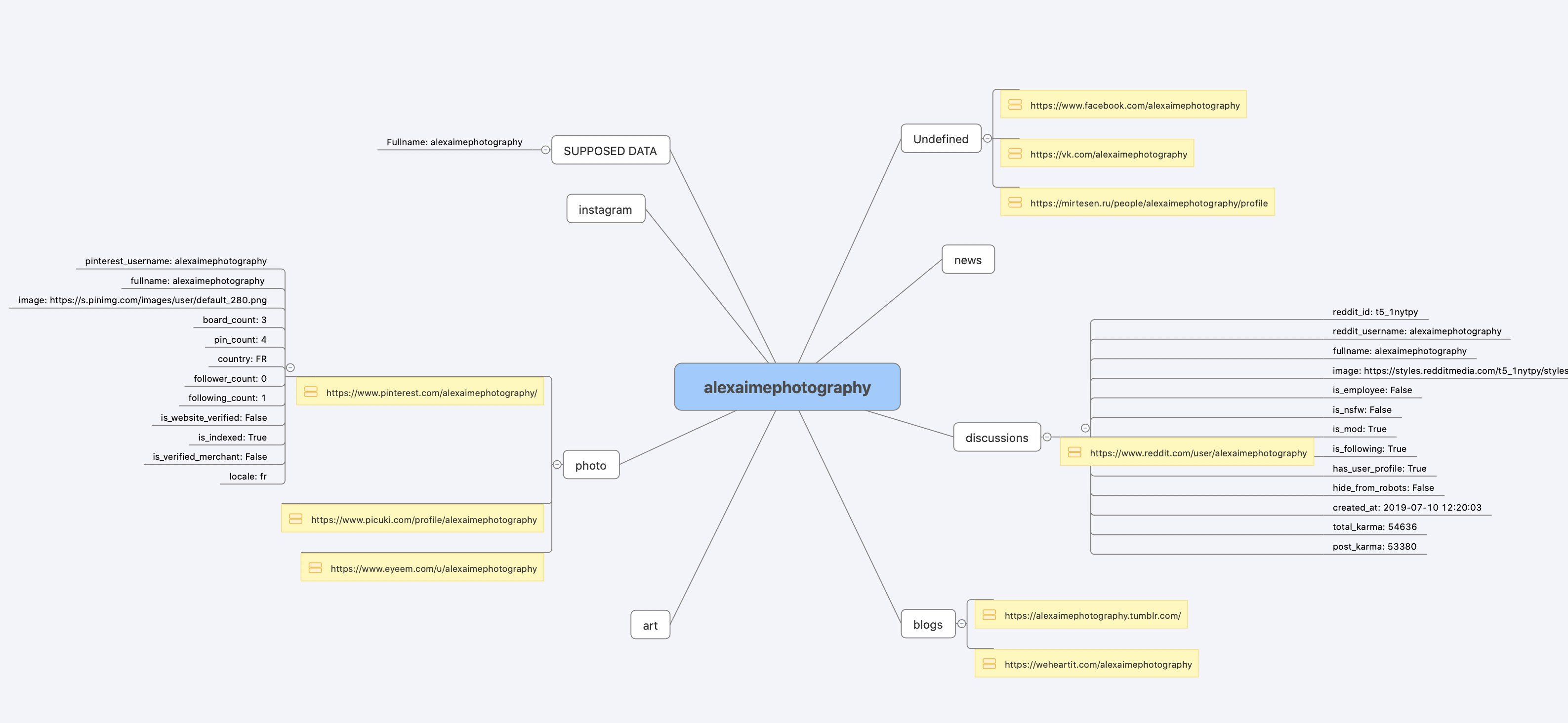

[PDF report](https://raw.githubusercontent.com/soxoj/maigret/main/static/report_alexaimephotographycars.pdf), [HTML report](https://htmlpreview.github.io/?https://raw.githubusercontent.com/soxoj/maigret/main/static/report_alexaimephotographycars.html)

|

||||

|

||||


[Full console output](https://raw.githubusercontent.com/soxoj/maigret/main/static/recursive_search.md)

|

||||

|

||||

## Disclaimer

|

||||

|

||||

**This tool is intended for educational and lawful purposes only.** The developers do not endorse or encourage any illegal activities or misuse of this tool. Regulations regarding the collection and use of personal data vary by country and region, including but not limited to GDPR in the EU, CCPA in the USA, and similar laws worldwide.

|

||||

|

||||

It is your sole responsibility to ensure that your use of this tool complies with all applicable laws and regulations in your jurisdiction. Any illegal use of this tool is strictly prohibited, and you are fully accountable for your actions.

|

||||

|

||||

The authors and developers of this tool bear no responsibility for any misuse or unlawful activities conducted by its users.

|

||||

|

||||

## SOWEL classification

|

||||

|

||||

This tool uses the following OSINT techniques:

|

||||

- [SOTL-2.2. Search For Accounts On Other Platforms](https://sowel.soxoj.com/other-platform-accounts)

|

||||

- [SOTL-6.1. Check Logins Reuse To Find Another Account](https://sowel.soxoj.com/logins-reuse)

|

||||

- [SOTL-6.2. Check Nicknames Reuse To Find Another Account](https://sowel.soxoj.com/nicknames-reuse)

|

||||

|

||||

## License

|

||||

|

||||

MIT © [Maigret](https://github.com/soxoj/maigret)<br/>

|

||||

|

||||

Executable

+18

@@ -0,0 +1,18 @@

|

||||

#!/usr/bin/env python3
import asyncio
import sys

from maigret.maigret import main


def run():
    try:
        loop = asyncio.get_event_loop()
        loop.run_until_complete(main())
    except KeyboardInterrupt:
        print('Maigret is interrupted.')
        sys.exit(1)


if __name__ == "__main__":
    run()
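Calling `asyncio.get_event_loop()` when no loop is running is deprecated in newer Python releases, so an entry point like this one could arguably be written with `asyncio.run()` instead. The following is a minimal sketch under that assumption; the `main` coroutine here is a hypothetical stand-in for `maigret.maigret.main`, used only to keep the example self-contained.

```python
import asyncio
import sys


async def main() -> int:
    # Stand-in for maigret.maigret.main (hypothetical body, illustration only).
    await asyncio.sleep(0)
    return 0


def run() -> int:
    try:
        # asyncio.run creates a new event loop, runs the coroutine, then closes the loop.
        return asyncio.run(main())
    except KeyboardInterrupt:
        print('Maigret is interrupted.')
        sys.exit(1)


if __name__ == "__main__":
    run()
```

The behaviour on Ctrl+C stays the same: the message is printed and the process exits with status 1.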

|

||||

+1

-1

@@ -10,4 +10,4 @@

|

||||

pixabay.com FALSE / FALSE 0 anonymous_user_id c1e4ee09-5674-4252-aa94-8c47b1ea80ab

|

||||

pixabay.com FALSE / FALSE 1647214439 csrftoken vfetTSvIul7gBlURt6s985JNM18GCdEwN5MWMKqX4yI73xoPgEj42dbNefjGx5fr

|

||||

pixabay.com FALSE / FALSE 1647300839 client_width 1680

|

||||

pixabay.com FALSE / FALSE 748111764839 is_human 1

|

||||

pixabay.com FALSE / FALSE 748111764839 is_human 1

|

||||

|

||||

@@ -1,2 +1 @@

|

||||

sphinx-copybutton

|

||||

sphinx_rtd_theme

|

||||

@@ -18,7 +18,7 @@ Parsing of account pages and online documents

|

||||

|

||||

Maigret will try to extract information about the document/account owner

|

||||

(including username and other ids) and will make a search by the

|

||||

extracted username and ids. See examples in the :ref:`extracting-information-from-pages` section.

|

||||

extracted username and ids. :doc:`Examples <extracting-information-from-pages>`.

|

||||

|

||||

Main options

|

||||

------------

|

||||

@@ -27,9 +27,9 @@ Options are also configurable through settings files, see

|

||||

:doc:`settings section <settings>`.

|

||||

|

||||

``--tags`` - Filter sites for searching by tags: sites categories and

|

||||

two-letter country codes (**not a language!**). E.g. photo, dating, sport; jp, us, global.

|

||||

Multiple tags can be associated with one site. **Warning**: tags markup is

|

||||

not stable now. Read more :doc:`in the separate section <tags>`.

|

||||

two-letter country codes. E.g. photo, dating, sport; jp, us, global.

|

||||

Multiple tags can be associated with one site. **Warning: tags markup is

|

||||

not stable now.**
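To illustrate tag filtering, here is a minimal sketch, assuming a site is kept when it carries at least one of the requested tags. The site names, the `filter_sites_by_tags` helper, and the any-of matching rule are assumptions made for the example, not Maigret's actual implementation.

```python
from typing import Dict, List, Set


def filter_sites_by_tags(sites: Dict[str, List[str]], wanted: Set[str]) -> List[str]:
    # Keep a site if it shares at least one tag with the requested set.
    return [name for name, tags in sites.items() if wanted & set(tags)]


# Hypothetical site database: site name -> tags (categories and country codes).
SITES = {
    "PhotoSite": ["photo", "us"],
    "DatingSite": ["dating", "jp"],
    "Forum": ["global"],
}

selected = filter_sites_by_tags(SITES, {"photo", "jp"})
print(selected)
```

Because one site can carry several tags, a category tag and a country tag can both select it.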

|

||||

|

||||

``-n``, ``--max-connections`` - Allowed number of concurrent connections

|

||||

**(default: 100)**.

|

||||

|

||||

+3

-3

@@ -3,11 +3,11 @@

|

||||

# -- Project information

|

||||

|

||||

project = 'Maigret'

|

||||

copyright = '2024, soxoj'

|

||||

copyright = '2021, soxoj'

|

||||

author = 'soxoj'

|

||||

|

||||

release = '0.4.4'

|

||||

version = '0.4.4'

|

||||

release = '0.4.2'

|

||||

version = '0.4.2'

|

||||

|

||||

# -- General configuration

|

||||

|

||||

|

||||

+9

-108

@@ -3,37 +3,16 @@

|

||||

Development

|

||||

==============

|

||||

|

||||

Frequently Asked Questions

|

||||

--------------------------

|

||||

|

||||

1. Where to find the list of supported sites?

|

||||

|

||||

The human-readable list of supported sites is available in the `sites.md <https://github.com/soxoj/maigret/blob/main/sites.md>`_ file in the repository.

|

||||

It's been generated automatically from the main JSON file with the list of supported sites.

|

||||

|

||||

The machine-readable JSON file with the list of supported sites is available in the

|

||||

`data.json <https://github.com/soxoj/maigret/blob/main/maigret/resources/data.json>`_ file in the directory `resources`.

|

||||

|

||||

2. Which methods to check the account presence are supported?

|

||||

|

||||

The supported methods (``checkType`` values in ``data.json``) are:

|

||||

|

||||

- ``message`` - the most reliable method, checks if any string from ``presenceStrs`` is present and none of the strings from ``absenceStrs`` are present in the HTML response

|

||||

- ``status_code`` - checks that status code of the response is 2XX

|

||||

- ``response_url`` - checks that there is no redirect and the response status is 2XX

|

||||

|

||||

See the details of check mechanisms in the `checking.py <https://github.com/soxoj/maigret/blob/main/maigret/checking.py#L339>`_ file.
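The three check types described above can be sketched roughly as follows. This is a literal reading of the descriptions, not the code from ``checking.py``: the `Response` and `SiteCheck` classes and the `account_exists` function are hypothetical names, and only the ``checkType``, ``presenceStrs`` and ``absenceStrs`` keys come from ``data.json``.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Response:
    # Hypothetical, simplified view of an HTTP response.
    status: int
    text: str = ""
    redirected: bool = False


@dataclass
class SiteCheck:
    # Field names mirror the data.json keys mentioned above.
    checkType: str
    presenceStrs: List[str] = field(default_factory=list)
    absenceStrs: List[str] = field(default_factory=list)


def account_exists(site: SiteCheck, resp: Response) -> bool:
    ok_status = 200 <= resp.status < 300
    if site.checkType == "message":
        # Literal reading: some presence string found and no absence string found.
        # The real implementation may treat an empty presenceStrs differently.
        has_presence = any(s in resp.text for s in site.presenceStrs)
        has_absence = any(s in resp.text for s in site.absenceStrs)
        return has_presence and not has_absence
    if site.checkType == "status_code":
        return ok_status
    if site.checkType == "response_url":
        return ok_status and not resp.redirected
    raise ValueError(f"unknown checkType: {site.checkType}")
```

The ``message`` branch inspects the page body rather than only the status line, which may be why it is described as the most reliable of the three.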

|

||||

|

||||

Testing

|

||||

-------

|

||||

|

||||

It is recommended to use Python 3.10 for testing.

|

||||

It is recommended to use Python 3.7/3.8 for testing due to some conflicts in 3.9.

|

||||

|

||||

Install test requirements:

|

||||

|

||||

.. code-block:: console

|

||||

|

||||

poetry install --with dev

|

||||

pip install -r test-requirements.txt

|

||||

|

||||

|

||||

Use the following commands to check Maigret:

|

||||

@@ -41,74 +20,19 @@ Use the following commands to check Maigret:

|

||||

.. code-block:: console

|

||||

|

||||

# run linter and typing checks

|

||||

# order of checks:

|

||||

# order of checks%

|

||||

# - critical syntax errors or undefined names

|

||||

# - flake checks

|

||||

# - mypy checks

|

||||

make lint

|

||||

|

||||

# run testing with coverage html report

|

||||

# current test coverage is 58%

|

||||

make test

|

||||

# current test coverage is 60%

|

||||

make text

|

||||

|

||||

# open html report

|

||||

open htmlcov/index.html

|

||||

|

||||

# get flamechart of imports to estimate startup time

|

||||

make speed

|

||||

|

||||

|

||||

How to fix false-positives

|

||||

-----------------------------------------------

|

||||

|

||||

If you want to work with the sites database, don't forget to activate the statistics update git hook: ``git config --local core.hooksPath .githooks/``.

|

||||

|

||||

Make your git commits from your Maigret git repo folder; otherwise the hook won't find the statistics update script.

|

||||

|

||||

1. Determine the problematic site.

|

||||

|

||||

If you already know which site has a false-positive and want to fix it specifically, go to the next step.

|

||||

|

||||

Otherwise, simply run a search with a random username (e.g. `laiuhi3h4gi3u4hgt`) and check the results.

|

||||

Alternatively, you can use `the Telegram bot <https://t.me/osint_maigret_bot>`_.

|

||||

|

||||

2. Open the account link in your browser and check:

|

||||

|

||||

- If the site is completely gone, remove it from the list

|

||||

- If the site still works but looks different, update how it is checked in data.json

|

||||

- If the site requires login to view profiles, disable checking it

|

||||

|

||||

3. Find the site in the `data.json <https://github.com/soxoj/maigret/blob/main/maigret/resources/data.json>`_ file.

|

||||

|

||||

If the ``checkType`` method is not ``message`` and you are going to fix the check, update it:

|

||||

- put ``message`` in ``checkType``

|

||||

- put in ``absenceStrs`` a keyword that is present in the HTML response for a non-existing account

|

||||

- put in ``presenceStrs`` a keyword that is present in the HTML response for an existing account

|

||||

|

||||

If you have trouble determining the right keywords, you can use automatic detection by passing the account URL with the ``--submit`` option:

|

||||

|

||||

.. code-block:: console

|

||||

|

||||

maigret --submit https://my.mail.ru/bk/alex

|

||||

|

||||

To disable checking, set ``disabled`` to ``true`` or simply run:

|

||||

|

||||

.. code-block:: console

|

||||

|

||||

maigret --self-check --site My.Mail.ru@bk.ru

|

||||

|

||||

To debug the check method using the response HTML, you can run:

|

||||

|

||||

.. code-block:: console

|

||||

|

||||

maigret soxoj --site My.Mail.ru@bk.ru -d 2> response.txt

|

||||

|

||||

A few options in the sites ``data.json`` are helpful in various cases:

|

||||

|

||||

- ``engine`` - a predefined check for the sites of certain type (e.g. forums), see the ``engines`` section in the JSON file

|

||||

- ``headers`` - a dictionary of additional headers to be sent to the site

|

||||

- ``requestHeadOnly`` - set to ``true`` if it's enough to make a HEAD request to the site

|

||||

- ``regexCheck`` - a regex to check if the username is valid, in case of frequent false-positives
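As an illustration of the ``regexCheck`` option, the sketch below skips usernames that a site could never accept. The helper name `username_is_checkable`, the sample pattern, and the choice of `re.search` over a full match are assumptions for the example rather than a description of Maigret's internals.

```python
import re
from typing import Optional


def username_is_checkable(username: str, regex_check: Optional[str]) -> bool:
    # With no regexCheck configured, every username is sent to the site.
    if not regex_check:
        return True
    # Assumption: the pattern is searched in the username; anchors in the
    # pattern itself decide whether the whole string must match.
    return re.search(regex_check, username) is not None


# Hypothetical pattern: 3 to 20 letters, digits or underscores.
PATTERN = r"^[a-zA-Z0-9_]{3,20}$"

print(username_is_checkable("soxoj", PATTERN))
print(username_is_checkable("so xoj!", PATTERN))
```

Rejecting such usernames before any request is made avoids false positives on sites that return a generic page for invalid names.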

|

||||

|

||||

How to publish new version of Maigret

|

||||

-------------------------------------

|

||||

@@ -145,7 +69,7 @@ PyPi package.

|

||||

4. Get auto-generated release notes:

|

||||

|

||||

- Open https://github.com/soxoj/maigret/releases/new

|

||||

- Click `Choose a tag`, enter `v0.4.0` (your version)

|

||||

- Click `Choose a tag`, enter `test`

|

||||

- Click `Create new tag`

|

||||

- Press `+ Auto-generate release notes`

|

||||

- Copy all the text from description text field below

|

||||

@@ -157,8 +81,8 @@ PyPi package.

|

||||

|

||||

.. code-block:: console

|

||||

|

||||

git add -p

|

||||

git commit -m 'Bump to YOUR VERSION'

|

||||

git add ...

|

||||

git commit -m 'Bump to 0.4.0'

|

||||

git push origin head

|

||||

|

||||

|

||||

@@ -174,27 +98,4 @@ PyPi package.

|

||||

- Press `+ Auto-generate release notes`

|

||||

- **Press "Publish release" button**

|

||||

|

||||

8. That's all; now simply wait for the push to PyPI. You can monitor it on the Actions page: https://github.com/soxoj/maigret/actions/workflows/python-publish.yml

|

||||

|

||||

Documentation updates

|

||||

---------------------

|

||||

|

||||

Documentation is auto-generated and auto-deployed from the ``docs`` directory.

|

||||

|

||||

To manually update documentation:

|

||||

|

||||

1. Change something in the ``.rst`` files in the ``docs/source`` directory.

|

||||

2. Run ``pip install -r requirements.txt`` in the docs directory.

|

||||

3. Run ``make singlehtml`` in the terminal in the docs directory.

|

||||

4. Open ``build/singlehtml/index.html`` in your browser to see the result.

|

||||

5. If everything is ok, commit and push your changes to GitHub.

|

||||

|

||||

Roadmap

|

||||

-------

|

||||

|

||||

.. warning::

|

||||

This roadmap requires updating to reflect the current project status and future plans.

|

||||

|

||||

.. figure:: https://i.imgur.com/kk8cFdR.png

|

||||

:target: https://i.imgur.com/kk8cFdR.png

|

||||

:align: center

|

||||

8. That's all; now simply wait for the push to PyPI. You can monitor it on the Actions page: https://github.com/soxoj/maigret/actions/workflows/python-publish.yml

|

||||

@@ -0,0 +1,35 @@

|

||||

.. _extracting-information-from-pages:

|

||||

|

||||

Extracting information from pages

|

||||

=================================

|

||||

Maigret can parse URLs and the content of the web pages they point to in order to extract information about the account owner and other metadata.

|

||||

|

||||

You must specify the URL with the ``--parse`` option; it can be a link to an account or an online document. See the `list of supported sites <https://github.com/soxoj/socid-extractor#sites>`_.

|

||||

|

||||

After the end of the parsing phase, Maigret will start the search phase by :doc:`supported identifiers <supported-identifier-types>` found (usernames, ids, etc.).

|

||||

|

||||

Examples

|

||||

--------

|

||||

.. code-block:: console

|

||||

|

||||

$ maigret --parse https://docs.google.com/spreadsheets/d/1HtZKMLRXNsZ0HjtBmo0Gi03nUPiJIA4CC4jTYbCAnXw/edit\#gid\=0

|

||||

|

||||

Scanning webpage by URL https://docs.google.com/spreadsheets/d/1HtZKMLRXNsZ0HjtBmo0Gi03nUPiJIA4CC4jTYbCAnXw/edit#gid=0...

|

||||

┣╸org_name: Gooten

|

||||

┗╸mime_type: application/vnd.google-apps.ritz

|

||||

Scanning webpage by URL https://clients6.google.com/drive/v2beta/files/1HtZKMLRXNsZ0HjtBmo0Gi03nUPiJIA4CC4jTYbCAnXw?fields=alternateLink%2CcopyRequiresWriterPermission%2CcreatedDate%2Cdescription%2CdriveId%2CfileSize%2CiconLink%2Cid%2Clabels(starred%2C%20trashed)%2ClastViewedByMeDate%2CmodifiedDate%2Cshared%2CteamDriveId%2CuserPermission(id%2Cname%2CemailAddress%2Cdomain%2Crole%2CadditionalRoles%2CphotoLink%2Ctype%2CwithLink)%2Cpermissions(id%2Cname%2CemailAddress%2Cdomain%2Crole%2CadditionalRoles%2CphotoLink%2Ctype%2CwithLink)%2Cparents(id)%2Ccapabilities(canMoveItemWithinDrive%2CcanMoveItemOutOfDrive%2CcanMoveItemOutOfTeamDrive%2CcanAddChildren%2CcanEdit%2CcanDownload%2CcanComment%2CcanMoveChildrenWithinDrive%2CcanRename%2CcanRemoveChildren%2CcanMoveItemIntoTeamDrive)%2Ckind&supportsTeamDrives=true&enforceSingleParent=true&key=AIzaSyC1eQ1xj69IdTMeii5r7brs3R90eck-m7k...

|

||||

┣╸created_at: 2016-02-16T18:51:52.021Z

|

||||

┣╸updated_at: 2019-10-23T17:15:47.157Z

|

||||

┣╸gaia_id: 15696155517366416778

|

||||

┣╸fullname: Nadia Burgess

|

||||

┣╸email: nadia@gooten.com

|

||||

┣╸image: https://lh3.googleusercontent.com/a-/AOh14GheZe1CyNa3NeJInWAl70qkip4oJ7qLsD8vDy6X=s64

|

||||

┗╸email_username: nadia

|

||||

|

||||

.. code-block:: console

|

||||

|

||||

$ maigret.py --parse https://steamcommunity.com/profiles/76561199113454789

|

||||

Scanning webpage by URL https://steamcommunity.com/profiles/76561199113454789...

|

||||

┣╸steam_id: 76561199113454789

|

||||

┣╸nickname: Pok

|

||||

┗╸username: Machine42

|

||||

+3

-124

@@ -14,99 +14,17 @@ Also, Maigret use found ids and usernames from links to start a recursive search

|

||||

|

||||

Enabled by default, can be disabled with ``--no-extracting``.

|

||||

|

||||

.. code-block:: text

|

||||

|

||||

$ python3 -m maigret soxoj --timeout 5

|

||||

[-] Starting a search on top 500 sites from the Maigret database...

|

||||

[!] You can run search by full list of sites with flag `-a`

|

||||

[*] Checking username soxoj on:

|

||||

...

|

||||

[+] GitHub: https://github.com/soxoj

|

||||

├─uid: 31013580

|

||||

├─image: https://avatars.githubusercontent.com/u/31013580?v=4

|

||||

├─created_at: 2017-08-14T17:03:07Z

|

||||

├─location: Amsterdam, Netherlands

|

||||

├─follower_count: 1304

|

||||

├─following_count: 54

|

||||

├─fullname: Soxoj

|

||||

├─public_gists_count: 3

|

||||

├─public_repos_count: 88

|

||||

├─twitter_username: sox0j

|

||||

├─bio: Head of OSINT Center of Excellence in @SocialLinks-IO

|

||||

├─is_company: Social Links

|

||||

└─blog_url: soxoj.com

|

||||

...

|

||||

|

||||

Recursive search

|

||||

----------------

|

||||

|

||||

Maigret has the ability to scan account pages for :ref:`common identifiers <supported-identifier-types>` and usernames found in links.

|

||||

When people include links to their other social media accounts, Maigret can automatically detect and initiate new searches for those profiles.

|

||||

Any information discovered through this process will be shown in both the command-line interface output and generated reports.

|

||||

Maigret can extract some :ref:`common ids <supported-identifier-types>` and usernames from links on the account page (often people placed links to their other accounts) and immediately start new searches. All the gathered information will be displayed in CLI output and reports.

|

||||

|

||||

Enabled by default, can be disabled with ``--no-recursion``.

|

||||

|

||||

|

||||

.. code-block:: text

|

||||

|

||||

$ python3 -m maigret soxoj --timeout 5

|

||||

[-] Starting a search on top 500 sites from the Maigret database...

|

||||

[!] You can run search by full list of sites with flag `-a`

|

||||

[*] Checking username soxoj on:

|

||||

...

|

||||

[+] GitHub: https://github.com/soxoj

|

||||

├─uid: 31013580

|

||||

├─image: https://avatars.githubusercontent.com/u/31013580?v=4

|

||||

├─created_at: 2017-08-14T17:03:07Z

|

||||

├─location: Amsterdam, Netherlands

|

||||

├─follower_count: 1304

|

||||

├─following_count: 54

|

||||

├─fullname: Soxoj

|

||||

├─public_gists_count: 3

|

||||

├─public_repos_count: 88

|

||||

├─twitter_username: sox0j <===== another username found here

|

||||

├─bio: Head of OSINT Center of Excellence in @SocialLinks-IO

|

||||

├─is_company: Social Links

|

||||

└─blog_url: soxoj.com

|

||||

...

|

||||

Searching |████████████████████████████████████████| 500/500 [100%] in 9.1s (54.85/s)

|

||||

[-] You can see detailed site check errors with a flag `--print-errors`

|

||||

[*] Checking username sox0j on:

|

||||

[+] Telegram: https://t.me/sox0j

|

||||

├─fullname: @Sox0j

|

||||

...
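The recursive behaviour shown in the output above can be sketched as a queue of usernames, where each new username found on an account page is searched exactly once. The `recursive_search` function and the `FAKE_RESULTS` table are hypothetical; real searches would query sites instead of a dictionary.

```python
from collections import deque
from typing import Callable, Dict, List, Set


def recursive_search(start: str, check: Callable[[str], List[str]]) -> List[str]:
    """Search `start`, then every new username that `check` reports, once each."""
    seen: Set[str] = {start}
    queue = deque([start])
    order: List[str] = []
    while queue:
        username = queue.popleft()
        order.append(username)
        for found in check(username):
            # Skip usernames that were already searched or queued.
            if found not in seen:
                seen.add(found)
                queue.append(found)
    return order


# Hypothetical stand-in for site checks: username -> usernames found on its pages.
FAKE_RESULTS: Dict[str, List[str]] = {"soxoj": ["sox0j"], "sox0j": ["soxoj"]}

searched = recursive_search("soxoj", lambda u: FAKE_RESULTS.get(u, []))
print(searched)
```

Tracking already-seen usernames keeps two accounts that link to each other from triggering an endless loop.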

|

||||

|

||||

Username permutations

|

||||

---------------------

|

||||

|

||||

Maigret can generate permutations of usernames. Just pass a few usernames in the CLI and use the ``--permute`` flag.

|

||||

Thanks to `@balestek <https://github.com/balestek>`_ for the idea and implementation.

|

||||

|

||||

.. code-block:: text

|

||||

|

||||

$ python3 -m maigret --permute hope dream --timeout 5

|

||||

[-] 12 permutations from hope dream to check...

|

||||

├─ hopedream

|

||||

├─ _hopedream

|

||||

├─ hopedream_

|

||||

├─ hope_dream

|

||||

├─ hope-dream

|

||||

├─ hope.dream

|

||||

├─ dreamhope

|

||||

├─ _dreamhope

|

||||

├─ dreamhope_

|

||||

├─ dream_hope

|

||||

├─ dream-hope

|

||||

└─ dream.hope

|

||||

[-] Starting a search on top 500 sites from the Maigret database...

|

||||

[!] You can run search by full list of sites with flag `-a`

|

||||

[*] Checking username hopedream on:

|

||||

...
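The twelve permutations listed above for ``hope dream`` can be reproduced with a short sketch. The `permute_usernames` function is a hypothetical reconstruction based only on that listing; how Maigret handles more than two inputs is not covered here.

```python
from itertools import permutations
from typing import List


def permute_usernames(parts: List[str]) -> List[str]:
    results: List[str] = []
    # Take every ordered pair of distinct inputs.
    for first, second in permutations(parts, 2):
        joined = first + second
        # Plain concatenation, then a leading and a trailing underscore.
        results += [joined, "_" + joined, joined + "_"]
        # The two parts joined by each separator.
        results += [first + sep + second for sep in ("_", "-", ".")]
    return results


candidates = permute_usernames(["hope", "dream"])
print(len(candidates))
print(candidates)
```

Each ordered pair contributes six variants, which gives the 12 candidates reported in the output for two inputs.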

|

||||

|

||||

Reports

|

||||

Reports

|

||||

-------

|

||||

|

||||

Maigret currently supports HTML, PDF, TXT, XMind 8 mindmap, and JSON reports.

|

||||

Maigret currently supports HTML, PDF, TXT, XMind mindmap, and JSON reports.

|

||||

|

||||

HTML/PDF reports contain:

|

||||

|

||||

@@ -116,9 +34,6 @@ HTML/PDF reports contain:

|

||||

|

||||

Also, there is a short text report in the CLI output after the end of a searching phase.

|

||||

|

||||

.. warning::

|

||||

XMind 8 mindmaps are incompatible with XMind 2022!

|

||||

|

||||

Tags

|

||||

----

|

||||

|

||||

@@ -153,42 +68,6 @@ The Maigret database contains not only the original websites, but also mirrors,

|

||||

|

||||

It allows getting additional info about the person and checking the existence of the account even if the main site is unavailable (bot protection, captcha, etc.)

|

||||

|

||||

.. _extracting-information-from-pages:

|

||||

|

||||

Extraction of information from account pages

|

||||

---------------------------------------------

|

||||

|

||||

Maigret can parse URLs and the content of the web pages they point to in order to extract information about the account owner and other metadata.

|

||||

|

||||

You must specify the URL with the ``--parse`` option; it can be a link to an account or an online document. See the `list of supported sites <https://github.com/soxoj/socid-extractor#sites>`_.

|

||||

|

||||

After the end of the parsing phase, Maigret will start the search phase by :doc:`supported identifiers <supported-identifier-types>` found (usernames, ids, etc.).

|

||||

|

||||

.. code-block:: console

|

||||

|

||||

$ maigret --parse https://docs.google.com/spreadsheets/d/1HtZKMLRXNsZ0HjtBmo0Gi03nUPiJIA4CC4jTYbCAnXw/edit\#gid\=0

|

||||

|

||||

Scanning webpage by URL https://docs.google.com/spreadsheets/d/1HtZKMLRXNsZ0HjtBmo0Gi03nUPiJIA4CC4jTYbCAnXw/edit#gid=0...

|

||||

┣╸org_name: Gooten

|

||||

┗╸mime_type: application/vnd.google-apps.ritz

|

||||

Scanning webpage by URL https://clients6.google.com/drive/v2beta/files/1HtZKMLRXNsZ0HjtBmo0Gi03nUPiJIA4CC4jTYbCAnXw?fields=alternateLink%2CcopyRequiresWriterPermission%2CcreatedDate%2Cdescription%2CdriveId%2CfileSize%2CiconLink%2Cid%2Clabels(starred%2C%20trashed)%2ClastViewedByMeDate%2CmodifiedDate%2Cshared%2CteamDriveId%2CuserPermission(id%2Cname%2CemailAddress%2Cdomain%2Crole%2CadditionalRoles%2CphotoLink%2Ctype%2CwithLink)%2Cpermissions(id%2Cname%2CemailAddress%2Cdomain%2Crole%2CadditionalRoles%2CphotoLink%2Ctype%2CwithLink)%2Cparents(id)%2Ccapabilities(canMoveItemWithinDrive%2CcanMoveItemOutOfDrive%2CcanMoveItemOutOfTeamDrive%2CcanAddChildren%2CcanEdit%2CcanDownload%2CcanComment%2CcanMoveChildrenWithinDrive%2CcanRename%2CcanRemoveChildren%2CcanMoveItemIntoTeamDrive)%2Ckind&supportsTeamDrives=true&enforceSingleParent=true&key=AIzaSyC1eQ1xj69IdTMeii5r7brs3R90eck-m7k...

|

||||

┣╸created_at: 2016-02-16T18:51:52.021Z

|

||||

┣╸updated_at: 2019-10-23T17:15:47.157Z

|

||||

┣╸gaia_id: 15696155517366416778

|

||||

┣╸fullname: Nadia Burgess

|

||||

┣╸email: nadia@gooten.com

|

||||

┣╸image: https://lh3.googleusercontent.com/a-/AOh14GheZe1CyNa3NeJInWAl70qkip4oJ7qLsD8vDy6X=s64

|

||||

┗╸email_username: nadia

|

||||

|

||||

.. code-block:: console

|

||||

|

||||

$ maigret.py --parse https://steamcommunity.com/profiles/76561199113454789

|

||||

Scanning webpage by URL https://steamcommunity.com/profiles/76561199113454789...

|

||||

┣╸steam_id: 76561199113454789

|

||||

┣╸nickname: Pok

|

||||

┗╸username: Machine42

|

||||

|

||||

|

||||

Simple API

|

||||

----------

|

||||

|

||||

|

||||

+7

-22

@@ -3,44 +3,29 @@

|

||||

Welcome to the Maigret docs!

|

||||

============================

|

||||

|

||||

**Maigret** is an easy-to-use and powerful OSINT tool for collecting a dossier on a person by a username (alias) only.

|

||||

**Maigret** is an easy-to-use and powerful OSINT tool for collecting a dossier on a person by username only.

|

||||

|

||||

This is achieved by checking for accounts on a huge number of sites and gathering all the available information from web pages.

|

||||

|

||||

The project's main goal is to give OSINT researchers and pentesters a **universal tool** to get maximum information

|

||||

about a person of interest by a username and integrate it with other tools in automation pipelines.

|

||||

|

||||

.. warning::

|

||||

**This tool is intended for educational and lawful purposes only.**

|

||||

The developers do not endorse or encourage any illegal activities or misuse of this tool.

|

||||

Regulations regarding the collection and use of personal data vary by country and region,

|

||||

including but not limited to GDPR in the EU, CCPA in the USA, and similar laws worldwide.

|

||||

|

||||

It is your sole responsibility to ensure that your use of this tool complies with all applicable laws

|

||||

and regulations in your jurisdiction. Any illegal use of this tool is strictly prohibited,

|

||||

and you are fully accountable for your actions.

|

||||

|

||||

The authors and developers of this tool bear no responsibility for any misuse

|

||||

or unlawful activities conducted by its users.

|

||||

The project's main goal is to give OSINT researchers and pentesters a **universal tool** to get maximum information about a subject and integrate it with other tools in automation pipelines.

|

||||

|

||||

You may be interested in:

|

||||

-------------------------

|

||||

- :doc:`Quick start <quick-start>`

|

||||

- :doc:`Usage examples <usage-examples>`

|

||||

- :doc:`Command line options <command-line-options>`

|

||||

- :doc:`Command line options description <command-line-options>` and :doc:`usage examples <usage-examples>`

|

||||

- :doc:`Features list <features>`

|

||||

- :doc:`Project roadmap <roadmap>`

|

||||

|

||||

.. toctree::

|

||||

:hidden:

|

||||

:caption: Sections

|

||||

|

||||

quick-start

|

||||

installation

|

||||

usage-examples

|

||||

command-line-options

|

||||

extracting-information-from-pages

|

||||

features

|

||||

philosophy

|

||||

roadmap

|

||||

supported-identifier-types

|

||||

tags

|

||||

usage-examples

|

||||

settings

|

||||

development

|

||||

|

||||

@@ -1,88 +0,0 @@

|

||||

.. _installation:

|

||||

|

||||

Installation

|

||||

============

|

||||

|

||||

Maigret can be installed using pip, Docker, or simply can be launched from the cloned repo.

|

||||

Also, it is available online via the `official Telegram bot <https://t.me/osint_maigret_bot>`_,

|

||||

the source code of the bot is `available on GitHub <https://github.com/soxoj/maigret-tg-bot>`_.

|

||||

|

||||

Package installing

|

||||

------------------

|

||||

|

||||

Please note that the sites database in the PyPI package may be outdated.

|

||||

If you encounter frequent false positive results, we recommend installing the latest development version from GitHub instead.

|

||||

|

||||

.. note::

|

||||

Python 3.10 or higher and pip are required, **Python 3.11 is recommended.**

|

||||

|

||||

.. code-block:: bash

|

||||

|

||||

# install from pypi

|

||||

pip3 install maigret

|

||||

|

||||

# usage

|

||||

maigret username

|

||||

|

||||

Development version (GitHub)

|

||||

----------------------------

|

||||

|

||||

.. code-block:: bash

|

||||

|

||||

git clone https://github.com/soxoj/maigret && cd maigret

|

||||

pip3 install .

|

||||

|

||||

# OR

|

||||

pip3 install git+https://github.com/soxoj/maigret.git

|

||||

|

||||

# usage

|

||||

maigret username

|

||||

|

||||

# OR use poetry in case you plan to develop Maigret

|

||||

pip3 install poetry

|

||||

poetry run maigret

|

||||

|

||||

Cloud shells and Jupyter notebooks

|

||||

----------------------------------

|

||||

|

||||

In case you don't want to install Maigret locally, you can use cloud shells and Jupyter notebooks.

|

||||

|

||||

.. image:: https://user-images.githubusercontent.com/27065646/92304704-8d146d80-ef80-11ea-8c29-0deaabb1c702.png

|

||||

:target: https://console.cloud.google.com/cloudshell/open?git_repo=https://github.com/soxoj/maigret&tutorial=README.md

|

||||

:alt: Open in Cloud Shell

|

||||

|

||||

.. image:: https://replit.com/badge/github/soxoj/maigret

|

||||

:target: https://repl.it/github/soxoj/maigret

|

||||

:alt: Run on Replit

|

||||

:height: 50

|

||||

|

||||

.. image:: https://colab.research.google.com/assets/colab-badge.svg

|

||||

:target: https://colab.research.google.com/gist/soxoj/879b51bc3b2f8b695abb054090645000/maigret-collab.ipynb

|

||||

:alt: Open In Colab

|

||||

:height: 45

|

||||

|

||||

.. image:: https://mybinder.org/badge_logo.svg

|

||||

:target: https://mybinder.org/v2/gist/soxoj/9d65c2f4d3bec5dd25949197ea73cf3a/HEAD

|

||||

:alt: Open In Binder

|

||||

:height: 45

|

||||

|

||||

Windows standalone EXE-binaries

|

||||

-------------------------------

|

||||

|

||||

Standalone EXE-binaries for Windows are located in the `Releases section <https://github.com/soxoj/maigret/releases>`_ of the GitHub repository.

|

||||

|

||||

Currently, the new binary is created automatically after each commit to the main branch, but is not deployed to the Releases section automatically.

|

||||

|

||||

Docker

|

||||

------

|

||||

|

||||

.. code-block:: bash

|

||||

|

||||

# official image of the development version, updated from the github repo

|

||||

docker pull soxoj/maigret

|

||||

|

||||

# usage

|

||||

docker run -v /mydir:/app/reports soxoj/maigret:latest username --html

|

||||

|

||||

# manual build

|

||||

docker build -t maigret .

|

||||

Binary file not shown.

|

Before Width: | Height: | Size: 375 KiB |

@@ -3,15 +3,4 @@

|

||||

Philosophy

|

||||

==========

|

||||

|

||||

TL;DR: Username => Dossier

|

||||

|

||||

Maigret is designed to gather all the available information about a person by their username.

|

||||

|

||||

What kind of information is this? First, links to the person's accounts. Second, all the machine-extractable

|

||||

pieces of info, such as: other usernames, full name, URLs to people's images, birthday, location (country,

|

||||

city, etc.), gender.

|

||||

|

||||

All this information forms a dossier, but it is also useful for other tools and analytical purposes.

|

||||

Each collected piece of data has a label of a certain format (for example, ``follower_count`` for the number

|

||||

of subscribers or ``created_at`` for account creation time) so that it can be parsed and analyzed by various

|

||||

systems and stored in databases.
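To show why fixed labels help downstream tools, here is a minimal sketch that parses two labeled values and serializes them for storage. The values are borrowed from the GitHub example output in these docs; the `PARSERS` mapping is a hypothetical helper, not part of Maigret.

```python
import json
from datetime import datetime, timezone

# Labeled values as they appear in the GitHub example output.
raw = {"follower_count": "1304", "created_at": "2017-08-14T17:03:07Z"}

# Hypothetical mapping from label to a parser for that label's format.
PARSERS = {
    "follower_count": int,
    "created_at": lambda v: datetime.strptime(v, "%Y-%m-%dT%H:%M:%SZ").replace(
        tzinfo=timezone.utc
    ),
}

# Convert every labeled string into a typed value.
parsed = {label: PARSERS[label](value) for label, value in raw.items()}

# Serialize for storage, turning the datetime back into an ISO 8601 string.
stored = json.dumps({**parsed, "created_at": parsed["created_at"].isoformat()})
print(stored)
```

Because each label implies a format, a consumer can pick the right parser by label alone.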

|

||||

Username => Dossier

|

||||

|

||||

@@ -1,15 +0,0 @@

|

||||

.. _quick-start:

|

||||

|

||||

Quick start

|

||||

===========

|

||||

|

||||

After :doc:`installing Maigret <installation>`, you can begin searching by providing one or more usernames to look up:

|

||||

|

||||