Mirror of https://github.com/soxoj/maigret.git (synced 2026-05-07 23:27:43 +00:00)

Compare commits: 54 commits

| SHA1 |
|---|
| 37854a867b |
| 6480eebbdf |
| aad862b2ed |
| c6d0f332bd |
| f1c006159e |
| 69a09fcd94 |
| 9f948928e6 |
| a3034c11ff |
| d47c72b972 |
| 8062ec30e9 |
| 32000a1cfd |
| 8af6ce3af5 |
| 0dd1dd5d76 |
| 4aab21046b |
| 92ac9ec8b7 |
| ca2c8b3502 |
| 4362a41fca |
| c7977f1cdf |
| 49708da980 |
| bc1398061f |
| e8634c8c56 |
| dc59b93f38 |
| c727cbae27 |
| e6c6cc8f6d |
| c80e8b1207 |
| 6e78fdeb81 |
| 9c22e09808 |
| f057fd3a68 |
| 9b0acc092a |
| e6b4cdfa77 |
| eb721dc7e3 |
| eba0c4531c |
| b4a26c03fe |
| 9b7f36dc24 |
| 05167ad30c |
| cee6f0aa43 |
| 02cf330e37 |
| 5c8f7a3af0 |
| 13e1b6f4d1 |
| 5179cb56eb |
| 1a2c7e944a |
| f7eae046a1 |
| bdff08cb70 |
| a468cb1cd3 |
| 0fe933e8a1 |
| 5c3de91181 |
| 3356463102 |
| 7ac03cf5ca |
| 4aeacef07d |
| 8de1830cf3 |
| ba6169659e |
| 4a5c5c3f07 |
| 4ba7fcb1ff |
| a76f95858f |

@@ -0,0 +1,13 @@
---
name: Add a site
about: I want to add a new site for Maigret checks
title: New site
labels: new-site
assignees: soxoj

---

Link to the site main page: https://example.com
Link to an existing account: https://example.com/users/john
Link to a nonexistent account: https://example.com/users/noonewouldeverusethis7
Tags: photo, us, ...

@@ -2,6 +2,16 @@

## [Unreleased]

## [0.3.1] - 2021-10-31
* fixed false positives
* made Maigret start 3 times faster

## [0.3.0] - 2021-06-02
* added support for Tor and I2P sites
* added an experimental DNS checking feature
* implemented sorting by data points in reports
* report fixes

## [0.2.4] - 2021-05-18
* CLI output report
* various improvements

@@ -0,0 +1,128 @@
# Contributor Covenant Code of Conduct

## Our Pledge

We as members, contributors, and leaders pledge to make participation in our
community a harassment-free experience for everyone, regardless of age, body
size, visible or invisible disability, ethnicity, sex characteristics, gender
identity and expression, level of experience, education, socio-economic status,
nationality, personal appearance, race, religion, or sexual identity
and orientation.

We pledge to act and interact in ways that contribute to an open, welcoming,
diverse, inclusive, and healthy community.

## Our Standards

Examples of behavior that contributes to a positive environment for our
community include:

* Demonstrating empathy and kindness toward other people
* Being respectful of differing opinions, viewpoints, and experiences
* Giving and gracefully accepting constructive feedback
* Accepting responsibility and apologizing to those affected by our mistakes,
  and learning from the experience
* Focusing on what is best not just for us as individuals, but for the
  overall community

Examples of unacceptable behavior include:

* The use of sexualized language or imagery, and sexual attention or
  advances of any kind
* Trolling, insulting or derogatory comments, and personal or political attacks
* Public or private harassment
* Publishing others' private information, such as a physical or email
  address, without their explicit permission
* Other conduct which could reasonably be considered inappropriate in a
  professional setting

## Enforcement Responsibilities

Community leaders are responsible for clarifying and enforcing our standards of
acceptable behavior and will take appropriate and fair corrective action in
response to any behavior that they deem inappropriate, threatening, offensive,
or harmful.

Community leaders have the right and responsibility to remove, edit, or reject
comments, commits, code, wiki edits, issues, and other contributions that are
not aligned to this Code of Conduct, and will communicate reasons for moderation
decisions when appropriate.

## Scope

This Code of Conduct applies within all community spaces, and also applies when
an individual is officially representing the community in public spaces.
Examples of representing our community include using an official e-mail address,
posting via an official social media account, or acting as an appointed
representative at an online or offline event.

## Enforcement

Instances of abusive, harassing, or otherwise unacceptable behavior may be
reported to the community leaders responsible for enforcement at
https://t.me/soxoj.
All complaints will be reviewed and investigated promptly and fairly.

All community leaders are obligated to respect the privacy and security of the
reporter of any incident.

## Enforcement Guidelines

Community leaders will follow these Community Impact Guidelines in determining
the consequences for any action they deem in violation of this Code of Conduct:

### 1. Correction

**Community Impact**: Use of inappropriate language or other behavior deemed
unprofessional or unwelcome in the community.

**Consequence**: A private, written warning from community leaders, providing
clarity around the nature of the violation and an explanation of why the
behavior was inappropriate. A public apology may be requested.

### 2. Warning

**Community Impact**: A violation through a single incident or series
of actions.

**Consequence**: A warning with consequences for continued behavior. No
interaction with the people involved, including unsolicited interaction with
those enforcing the Code of Conduct, for a specified period of time. This
includes avoiding interactions in community spaces as well as external channels
like social media. Violating these terms may lead to a temporary or
permanent ban.

### 3. Temporary Ban

**Community Impact**: A serious violation of community standards, including
sustained inappropriate behavior.

**Consequence**: A temporary ban from any sort of interaction or public
communication with the community for a specified period of time. No public or
private interaction with the people involved, including unsolicited interaction
with those enforcing the Code of Conduct, is allowed during this period.
Violating these terms may lead to a permanent ban.

### 4. Permanent Ban

**Community Impact**: Demonstrating a pattern of violation of community
standards, including sustained inappropriate behavior, harassment of an
individual, or aggression toward or disparagement of classes of individuals.

**Consequence**: A permanent ban from any sort of public interaction within
the community.

## Attribution

This Code of Conduct is adapted from the [Contributor Covenant][homepage],
version 2.0, available at
https://www.contributor-covenant.org/version/2/0/code_of_conduct.html.

Community Impact Guidelines were inspired by [Mozilla's code of conduct
enforcement ladder](https://github.com/mozilla/diversity).

[homepage]: https://www.contributor-covenant.org

For answers to common questions about this code of conduct, see the FAQ at
https://www.contributor-covenant.org/faq. Translations are available at
https://www.contributor-covenant.org/translations.

@@ -0,0 +1,30 @@
# How to contribute

Hey! I'm really glad you're reading this. Maigret supports a lot of sites, and it is very hard to keep all of them operational. That's why every fix matters.

## How to add a new site

#### Beginner level

You can use Maigret **submit mode** (`maigret --submit URL`) to add a new site or update an existing one. In this mode Maigret automatically analyzes the given account URL or site main page URL to determine the site engine and the methods for checking whether an account exists. After the check, Maigret asks whether you want to add the site; answering y/Y rewrites the local database.

#### Advanced level

You can edit [the database JSON file](https://github.com/soxoj/maigret/blob/main/maigret/resources/data.json) (`./maigret/resources/data.json`) manually.
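
The links requested by the "Add a site" issue template map fairly directly onto a manual database entry. Below is a minimal sketch of that mapping. `build_site_entry` is a hypothetical helper, and both the field names (`urlMain`, `url`, `usernameClaimed`, `usernameUnclaimed`, `tags`) and the `{username}` placeholder are assumptions about the `data.json` format; check them against the real file before editing it.

```python
from typing import Dict, List, Union


def build_site_entry(
    main_url: str,
    existing_account_url: str,
    claimed_username: str,
    unclaimed_username: str,
    tags: List[str],
) -> Dict[str, Union[str, List[str]]]:
    """Draft a site entry from the links an "Add a site" issue asks for.

    Hypothetical helper: the field names are assumptions modelled on
    Maigret's data.json and must be verified against the actual file.
    """
    if claimed_username not in existing_account_url:
        raise ValueError("the existing-account URL must contain the username")
    # Replace only the last occurrence, so a username that also appears
    # earlier in the URL (e.g. in the domain) is left untouched there.
    head, _, tail = existing_account_url.rpartition(claimed_username)
    url_template = head + "{username}" + tail
    return {
        "urlMain": main_url,
        "url": url_template,
        "usernameClaimed": claimed_username,
        "usernameUnclaimed": unclaimed_username,
        "tags": tags,
    }


entry = build_site_entry(
    "https://example.com",
    "https://example.com/users/john",
    "john",
    "noonewouldeverusethis7",
    ["photo", "us"],
)
print(entry["url"])  # https://example.com/users/{username}
```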

## Testing

Every PR to the Maigret repository is checked by CI, but it is better to run `make format`, `make lint`, and `make test` locally first to make sure your changes are correct.

## Submitting changes

To submit your changes, [send a GitHub PR](https://github.com/soxoj/maigret/pulls) to the Maigret project.

Always write a clear log message for your commits. One-line messages are fine for small changes, but bigger changes should look like this:

    $ git commit -m "A brief summary of the commit
    >
    > A paragraph describing what changed and its impact."

## Coding conventions

Start reading the code and you'll get the hang of it. ;)

+8 -17

@@ -1,25 +1,16 @@
FROM python:3.7
LABEL maintainer="Soxoj <soxoj@protonmail.com>"

FROM python:3.9
MAINTAINER Soxoj <soxoj@protonmail.com>
WORKDIR /app

ADD requirements.txt .

RUN pip install --upgrade pip

RUN apt update -y

RUN apt install -y\
RUN apt update && \
apt install -y \
gcc \
musl-dev \
libxml2 \
libxml2-dev \
libxslt-dev \
&& YARL_NO_EXTENSIONS=1 python3 -m pip install maigret \
&& rm -rf /var/cache/apk/* \
/tmp/* \
/var/tmp/*

libxslt-dev
RUN apt clean \
&& rm -rf /var/lib/apt/lists/* /tmp/*
ADD . .

RUN YARL_NO_EXTENSIONS=1 python3 -m pip install .
ENTRYPOINT ["maigret"]

@@ -0,0 +1,35 @@
LINT_FILES=maigret wizard.py tests

test:
	coverage run --source=./maigret -m pytest tests
	coverage report -m
	coverage html

rerun-tests:
	pytest --lf -vv

lint:
	@echo 'syntax errors or undefined names'
	flake8 --count --select=E9,F63,F7,F82 --show-source --statistics ${LINT_FILES} maigret.py

	@echo 'warning'
	flake8 --count --exit-zero --max-complexity=10 --max-line-length=127 --statistics --ignore=E731,W503 ${LINT_FILES} maigret.py

	@echo 'mypy'
	mypy ${LINT_FILES}

format:
	@echo 'black'
	black --skip-string-normalization ${LINT_FILES}

pull:
	git stash
	git checkout main
	git pull origin main
	git stash pop

clean:
	rm -rf reports htmlcov dist

install:
	pip3 install .

@@ -8,15 +8,12 @@
<a href="https://pypi.org/project/maigret/">
<img alt="PyPI - Downloads" src="https://img.shields.io/pypi/dw/maigret?style=flat-square">
</a>
<a href="https://gitter.im/maigret-osint/community">
<img alt="Chat - Gitter" src="./static/chat_gitter.svg" />
</a>
<a href="https://twitter.com/intent/follow?screen_name=sox0j">
<img src="https://img.shields.io/twitter/follow/sox0j?label=Follow%20sox0j&style=social&color=blue" alt="Follow @sox0j" />
<a href="https://pypi.org/project/maigret/">
<img alt="Views" src="https://komarev.com/ghpvc/?username=maigret&color=brightgreen&label=views&style=flat-square">
</a>
</p>
<p align="center">
<img src="./static/maigret.png" height="200"/>
<img src="https://raw.githubusercontent.com/soxoj/maigret/main/static/maigret.png" height="200"/>
</p>
</p>

@@ -24,9 +21,9 @@

## About

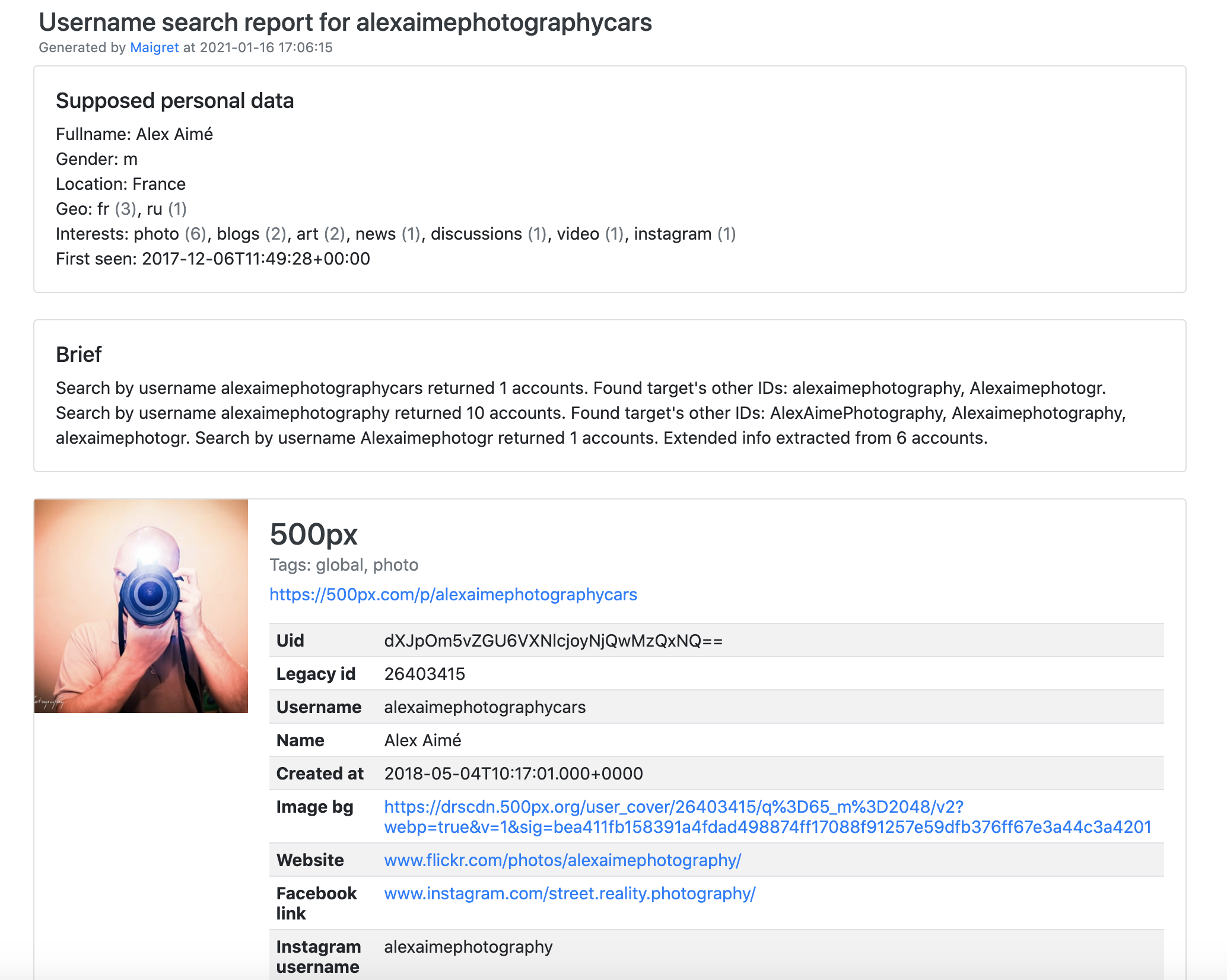

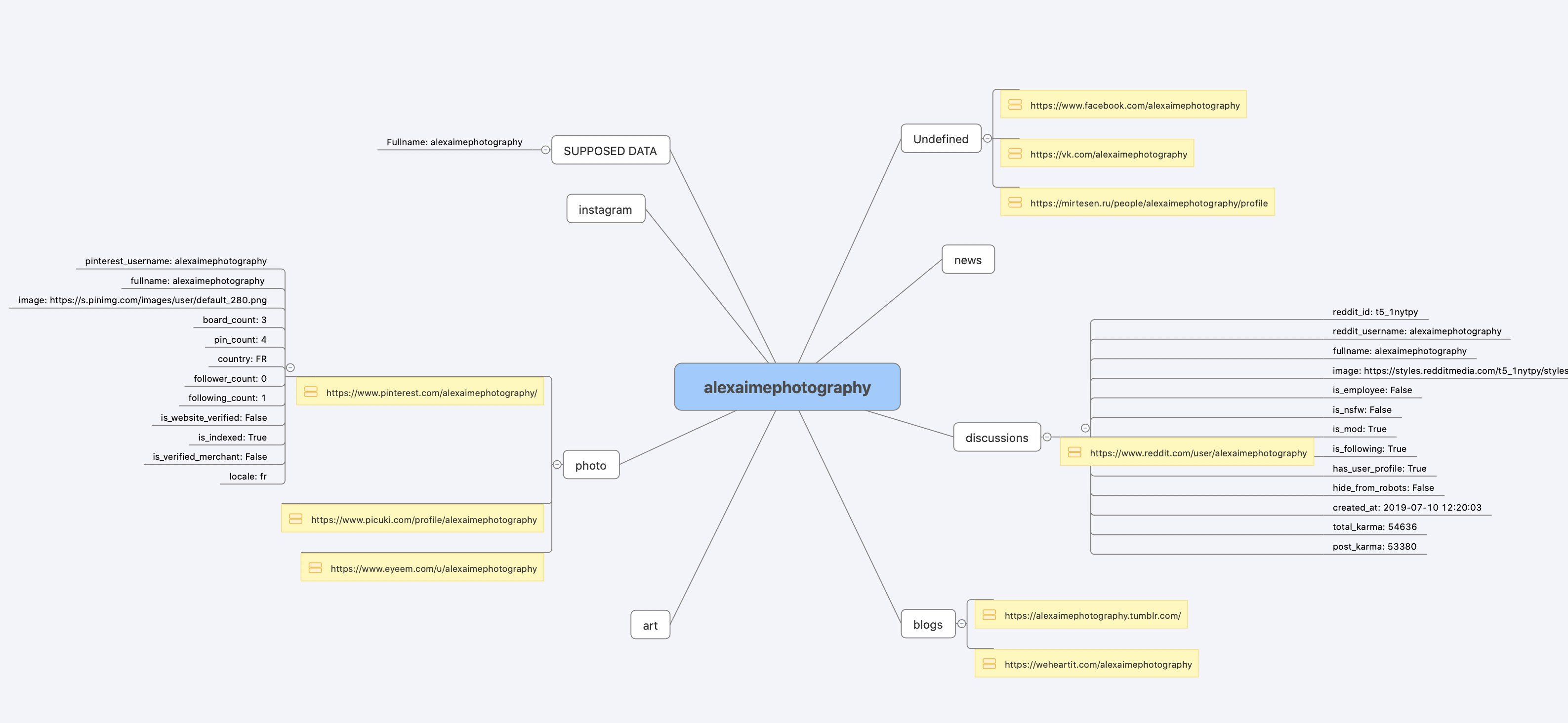

**Maigret** collects a dossier on a person **by username only**, checking for accounts on a huge number of sites and gathering all the available information from web pages. Maigret is an easy-to-use and powerful fork of [Sherlock](https://github.com/sherlock-project/sherlock).
**Maigret** collects a dossier on a person **by username only**, checking for accounts on a huge number of sites and gathering all the available information from web pages. No API keys are required. Maigret is an easy-to-use and powerful fork of [Sherlock](https://github.com/sherlock-project/sherlock).

More than 2000 sites are currently supported ([full list](./sites.md)); by default, the search runs against the 500 most popular sites, in descending order of popularity.
More than 2000 sites are currently supported ([full list](https://raw.githubusercontent.com/soxoj/maigret/main/sites.md)); by default, the search runs against the 500 most popular sites, in descending order of popularity. Checking of Tor sites, I2P sites, and domains (via DNS resolving) is also supported.

## Main features

@@ -41,10 +38,13 @@ See full description of Maigret features [in the Wiki](https://github.com/soxoj/
## Installation

Maigret can be installed using pip or Docker, or simply launched from the cloned repo.
You can also run Maigret in cloud shells (see the buttons below).
You can also run Maigret in cloud shells and Jupyter notebooks (see the buttons below).

[](https://console.cloud.google.com/cloudshell/open?git_repo=https://github.com/soxoj/maigret&tutorial=README.md) [](https://repl.it/github/soxoj/maigret)
<a href="https://colab.research.google.com/gist//soxoj/879b51bc3b2f8b695abb054090645000/maigret.ipynb"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab" height="40"></a>
[](https://console.cloud.google.com/cloudshell/open?git_repo=https://github.com/soxoj/maigret&tutorial=README.md)
<a href="https://repl.it/github/soxoj/maigret"><img src="https://user-images.githubusercontent.com/27065646/92304596-bf719b00-ef7f-11ea-987f-2c1f3c323088.png" alt="Run on Repl.it" height="50"></a>

<a href="https://colab.research.google.com/gist/soxoj/879b51bc3b2f8b695abb054090645000/maigret-collab.ipynb"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab" height="45"></a>
<a href="https://mybinder.org/v2/gist/soxoj/9d65c2f4d3bec5dd25949197ea73cf3a/HEAD"><img src="https://mybinder.org/badge_logo.svg" alt="Open In Binder" height="45"></a>

### Package installing

@@ -103,16 +103,16 @@ Use `maigret --help` to get full options description. Also options are documente

## Demo with page parsing and recursive username search

[PDF report](./static/report_alexaimephotographycars.pdf), [HTML report](https://htmlpreview.github.io/?https://raw.githubusercontent.com/soxoj/maigret/main/static/report_alexaimephotographycars.html)
[PDF report](https://raw.githubusercontent.com/soxoj/maigret/main/static/report_alexaimephotographycars.pdf), [HTML report](https://htmlpreview.github.io/?https://raw.githubusercontent.com/soxoj/maigret/main/static/report_alexaimephotographycars.html)

[Full console output](./static/recursive_search.md)
[Full console output](https://raw.githubusercontent.com/soxoj/maigret/main/static/recursive_search.md)

## License

@@ -0,0 +1,68 @@
{
 "cells": [
  {
   "cell_type": "code",
   "execution_count": null,
   "metadata": {
    "id": "8v6PEfyXb0Gx"
   },
   "outputs": [],
   "source": [
    "# clone the repo\n",
    "!git clone https://github.com/soxoj/maigret\n",
    "!pip3 install -r maigret/requirements.txt"
   ]
  },
  {
   "cell_type": "code",
   "execution_count": null,
   "metadata": {
    "id": "cXOQUAhDchkl"
   },
   "outputs": [],
   "source": [
    "# help\n",
    "!python3 maigret/maigret.py --help"
   ]
  },
  {
   "cell_type": "code",
   "execution_count": null,
   "metadata": {
    "id": "SjDmpN4QGnJu"
   },
   "outputs": [],
   "source": [
    "# search\n",
    "!python3 maigret/maigret.py user"
   ]
  }
 ],
 "metadata": {
  "colab": {
   "collapsed_sections": [],
   "include_colab_link": true,
   "name": "maigret.ipynb",
   "provenance": []
  },
  "kernelspec": {
   "display_name": "Python 3",
   "language": "python",
   "name": "python3"
  },
  "language_info": {
   "codemirror_mode": {
    "name": "ipython",
    "version": 3
   },
   "file_extension": ".py",
   "mimetype": "text/x-python",
   "name": "python",
   "nbconvert_exporter": "python",
   "pygments_lexer": "ipython3",
   "version": "3.7.10"
  }
 },
 "nbformat": 4,
 "nbformat_minor": 1
}

@@ -1,5 +0,0 @@
#!/bin/sh
FILES="maigret wizard.py maigret.py tests"

echo 'black'
black --skip-string-normalization $FILES

@@ -1,11 +0,0 @@
#!/bin/sh
FILES="maigret wizard.py maigret.py tests"

echo 'syntax errors or undefined names'
flake8 --count --select=E9,F63,F7,F82 --show-source --statistics $FILES

echo 'warning'
flake8 --count --exit-zero --max-complexity=10 --max-line-length=127 --statistics --ignore=E731,W503 $FILES

echo 'mypy'
mypy ./maigret ./wizard.py ./tests

@@ -1,3 +1,3 @@
"""Maigret version file"""

__version__ = '0.2.4'
__version__ = '0.3.1'

@@ -35,7 +35,7 @@ class ParsingActivator:
site.headers["authorization"] = f"Bearer {bearer_token}"


async def import_aiohttp_cookies(cookiestxt_filename):
def import_aiohttp_cookies(cookiestxt_filename):
cookies_obj = MozillaCookieJar(cookiestxt_filename)
cookies_obj.load(ignore_discard=True, ignore_expires=True)
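
`import_aiohttp_cookies` loads the cookies file with `MozillaCookieJar.load(ignore_discard=True, ignore_expires=True)`. The stdlib-only sketch below (made-up cookie values, no aiohttp involved; `load_cookie_names` is a hypothetical helper) shows what those two flags change: without them, session cookies (empty expiry field) and cookies whose expiry time has already passed are silently skipped during loading.

```python
import os
import tempfile
from http.cookiejar import MozillaCookieJar
from typing import Set

# Netscape/Mozilla cookies.txt: tab-separated domain, include-subdomains flag,
# path, secure flag, expiry (Unix time, empty for a session cookie), name, value.
COOKIES_TXT = (
    "# Netscape HTTP Cookie File\n"
    ".example.com\tTRUE\t/\tFALSE\t1\texpired_cookie\told\n"
    ".example.com\tTRUE\t/\tFALSE\t\tsession_cookie\tabc123\n"
)


def load_cookie_names(path: str, keep_all: bool) -> Set[str]:
    """Load a cookies.txt file and return the names of the cookies that were kept."""
    jar = MozillaCookieJar(path)
    # ignore_discard=True keeps session cookies; ignore_expires=True keeps
    # cookies whose expiry time is already in the past.
    jar.load(ignore_discard=keep_all, ignore_expires=keep_all)
    return {cookie.name for cookie in jar}


with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "cookies.txt")
    with open(path, "w") as f:
        f.write(COOKIES_TXT)
    print(sorted(load_cookie_names(path, keep_all=True)))   # ['expired_cookie', 'session_cookie']
    print(sorted(load_cookie_names(path, keep_all=False)))  # []
```

Keeping both kinds of cookies is a reasonable default for a tool that replays cookies exported from a browser, where session cookies often carry the login state.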

+185 -32

@@ -1,6 +1,11 @@
import asyncio
import logging
from mock import Mock

try:
from mock import Mock
except ImportError:
from unittest.mock import Mock

import re
import ssl
import sys

@@ -8,11 +13,11 @@ import tqdm
from typing import Tuple, Optional, Dict, List
from urllib.parse import quote

import aiohttp
import aiodns
import tqdm.asyncio
from aiohttp_socks import ProxyConnector
from python_socks import _errors as proxy_errors
from socid_extractor import extract
from aiohttp import TCPConnector, ClientSession, http_exceptions
from aiohttp.client_exceptions import ServerDisconnectedError, ClientConnectorError

from .activation import ParsingActivator, import_aiohttp_cookies

@@ -30,6 +35,7 @@ from .utils import get_random_user_agent, ascii_data_display


SUPPORTED_IDS = (
"username",
"yandex_public_id",
"gaia_id",
"vk_id",

@@ -43,13 +49,53 @@ SUPPORTED_IDS = (
BAD_CHARS = "#"


async def get_response(request_future, logger) -> Tuple[str, int, Optional[CheckError]]:
class CheckerBase:
pass


class SimpleAiohttpChecker(CheckerBase):
def __init__(self, *args, **kwargs):
proxy = kwargs.get('proxy')
cookie_jar = kwargs.get('cookie_jar')
self.logger = kwargs.get('logger', Mock())

# moved here to speed up the launch of Maigret
from aiohttp_socks import ProxyConnector

# make http client session
connector = (
ProxyConnector.from_url(proxy) if proxy else TCPConnector(ssl=False)
)
connector.verify_ssl = False
self.session = ClientSession(
connector=connector, trust_env=True, cookie_jar=cookie_jar
)

def prepare(self, url, headers=None, allow_redirects=True, timeout=0, method='get'):
if method == 'get':
request_method = self.session.get
else:
request_method = self.session.head

future = request_method(
url=url,
headers=headers,
allow_redirects=allow_redirects,
timeout=timeout,
)

return future

async def close(self):
await self.session.close()

async def check(self, future) -> Tuple[str, int, Optional[CheckError]]:
html_text = None
status_code = 0
error: Optional[CheckError] = CheckError("Unknown")

try:
response = await request_future
response = await future

status_code = response.status
response_content = await response.content.read()

@@ -61,7 +107,7 @@ async def get_response(request_future, logger) -> Tuple[str, int, Optional[Check
if status_code == 0:
error = CheckError("Connection lost")

logger.debug(html_text)
self.logger.debug(html_text)

except asyncio.TimeoutError as e:
error = CheckError("Request timeout", str(e))

@@ -69,7 +115,7 @@ async def get_response(request_future, logger) -> Tuple[str, int, Optional[Check
error = CheckError("Connecting failure", str(e))
except ServerDisconnectedError as e:
error = CheckError("Server disconnected", str(e))
except aiohttp.http_exceptions.BadHttpMessage as e:
except http_exceptions.BadHttpMessage as e:
error = CheckError("HTTP", str(e))
except proxy_errors.ProxyError as e:
error = CheckError("Proxy", str(e))
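
The `except` branches in this hunk and the next turn request failures into typed `CheckError`s. A stdlib-only sketch of the same idea follows; `classify_exception` is a hypothetical name, the aiohttp and proxy branches are left out, and a plain `(type, description)` tuple stands in for `CheckError`. The `sys.version_info.minor > 6` guard in the next hunk exists because `ssl.SSLCertVerificationError` was only added in Python 3.7.

```python
import asyncio
import ssl
from typing import Tuple


def classify_exception(exc: BaseException) -> Tuple[str, str]:
    """Map an exception raised while awaiting a request to (error type, description).

    Stdlib-only subset of the checker's except-chain; plain tuples stand in
    for Maigret's CheckError.
    """
    # Check timeouts first: on Python 3.11+ asyncio.TimeoutError is the
    # builtin TimeoutError, which is an OSError subclass.
    if isinstance(exc, asyncio.TimeoutError):
        return "Request timeout", str(exc)
    # ssl.SSLCertVerificationError subclasses ssl.SSLError, so this single
    # check also covers certificate-verification failures.
    if isinstance(exc, ssl.SSLError):
        return "SSL", str(exc)
    return "Unexpected", str(exc)


print(classify_exception(asyncio.TimeoutError()))  # ('Request timeout', '')
print(classify_exception(ssl.SSLCertVerificationError("bad certificate")))
print(classify_exception(ValueError("boom")))  # ('Unexpected', 'boom')
```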

@@ -78,16 +124,75 @@ async def get_response(request_future, logger) -> Tuple[str, int, Optional[Check
except Exception as e:
# python-specific exceptions
if sys.version_info.minor > 6 and (
isinstance(e, ssl.SSLCertVerificationError) or isinstance(e, ssl.SSLError)
isinstance(e, ssl.SSLCertVerificationError)
or isinstance(e, ssl.SSLError)
):
error = CheckError("SSL", str(e))
else:
logger.debug(e, exc_info=True)
self.logger.debug(e, exc_info=True)
error = CheckError("Unexpected", str(e))

return str(html_text), status_code, error


class ProxiedAiohttpChecker(SimpleAiohttpChecker):
def __init__(self, *args, **kwargs):
proxy = kwargs.get('proxy')
cookie_jar = kwargs.get('cookie_jar')
self.logger = kwargs.get('logger', Mock())

# moved here to speed up the launch of Maigret
from aiohttp_socks import ProxyConnector

connector = ProxyConnector.from_url(proxy)
connector.verify_ssl = False
self.session = ClientSession(
connector=connector, trust_env=True, cookie_jar=cookie_jar
)


class AiodnsDomainResolver(CheckerBase):
def __init__(self, *args, **kwargs):
loop = asyncio.get_event_loop()
self.logger = kwargs.get('logger', Mock())
self.resolver = aiodns.DNSResolver(loop=loop)

def prepare(self, url, headers=None, allow_redirects=True, timeout=0, method='get'):
return self.resolver.query(url, 'A')

async def check(self, future) -> Tuple[str, int, Optional[CheckError]]:
status = 404
error = None
text = ''

try:
res = await future
text = str(res[0].host)
status = 200
except aiodns.error.DNSError:
pass
except Exception as e:
self.logger.error(e, exc_info=True)
error = CheckError('DNS resolve error', str(e))

return text, status, error


class CheckerMock:
def __init__(self, *args, **kwargs):
pass

def prepare(self, url, headers=None, allow_redirects=True, timeout=0, method='get'):
return None

async def check(self, future) -> Tuple[str, int, Optional[CheckError]]:
await asyncio.sleep(0)
return '', 0, None

async def close(self):
return


# TODO: move to separate class
def detect_error_page(
html_text, status_code, fail_flags, ignore_403
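
`AiodnsDomainResolver.check` reports a domain as found (status 200, text set to the first resolved host) when the A-record lookup succeeds, as not found (404, no error) on a DNS error, and as an error otherwise. Below is a sketch of that mapping with an injectable resolver. It swaps aiodns for the standard library (`loop.getaddrinfo`, with `socket.gaierror` standing in for `aiodns.error.DNSError`), and `dns_check`, `system_resolve`, and `fake_resolve` are hypothetical names.

```python
import asyncio
import socket
from typing import Awaitable, Callable, List, Optional, Tuple

# An async function mapping a domain name to a list of IPv4 addresses.
Resolver = Callable[[str], Awaitable[List[str]]]


async def dns_check(domain: str, resolve: Resolver) -> Tuple[str, int, Optional[str]]:
    """Report a domain as (text, status, error), following the DNS checker's rules."""
    try:
        addresses = await resolve(domain)
        # A resolved address counts as "found"; the first one becomes the text.
        return addresses[0], 200, None
    except socket.gaierror:
        # The name does not resolve: "not found", but not an error.
        return "", 404, None
    except Exception as e:
        # Anything else is reported as an error while the status stays 404.
        return "", 404, f"DNS resolve error: {e}"


async def system_resolve(domain: str) -> List[str]:
    """Resolve IPv4 addresses with the event loop's getaddrinfo instead of aiodns."""
    loop = asyncio.get_running_loop()
    infos = await loop.getaddrinfo(domain, None, family=socket.AF_INET)
    return [sockaddr[0] for _family, _type, _proto, _name, sockaddr in infos]


async def fake_resolve(domain: str) -> List[str]:
    """Offline stand-in resolver used in the example below."""
    if domain == "example.com":
        return ["192.0.2.10"]
    raise socket.gaierror("Name or service not known")


print(asyncio.run(dns_check("example.com", fake_resolve)))      # ('192.0.2.10', 200, None)
print(asyncio.run(dns_check("missing.invalid", fake_resolve)))  # ('', 404, None)
```

With network access, `asyncio.run(dns_check("example.com", system_resolve))` performs a real lookup.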

@@ -322,7 +427,8 @@ def make_site_result(
# workaround to prevent slash errors
url = re.sub("(?<!:)/+", "/", url)

session = options['session']
# always clearweb_checker for now
checker = options["checkers"][site.protocol]

# site check is disabled
if site.disabled and not options['forced']:
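
The slash workaround in `make_site_result` relies on a negative lookbehind: runs of `/` are collapsed everywhere except directly after `:`, so the scheme's `//` survives. Here is a standalone check of the same pattern; `collapse_slashes` is our name for illustration, not a Maigret function.

```python
import re


def collapse_slashes(url: str) -> str:
    """Collapse runs of '/' into one, except the '//' that follows the scheme's ':'."""
    # (?<!:) is a negative lookbehind: a slash preceded by ':' cannot start a
    # match, so 'https://' keeps both of its slashes.
    return re.sub("(?<!:)/+", "/", url)


print(collapse_slashes("https://example.com//users///john"))
# https://example.com/users/john
```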

@@ -381,12 +487,12 @@ def make_site_result(
# In most cases when we are detecting by status code,
# it is not necessary to get the entire body: we can
# detect fine with just the HEAD response.
request_method = session.head
request_method = 'head'
else:
# Either this detect method needs the content associated
# with the GET response, or this specific website will
# not respond properly unless we request the whole page.
request_method = session.get
request_method = 'get'

if site.check_type == "response_url":
# Site forwards request to a different URL if username not
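
The comments above explain when a HEAD request is enough. The actual `if` condition lies outside this hunk, so the helper below is only a hypothetical restatement of that reasoning. The check-type name `"status_code"` and the `needs_full_page` flag are assumptions. The return values match the `'head'`/`'get'` strings that the new code passes to `checker.prepare(method=...)`.

```python
def choose_request_method(check_type: str, needs_full_page: bool) -> str:
    """Pick 'head' or 'get' following the reasoning in the comments above.

    Hypothetical helper: `needs_full_page` stands in for "this site does not
    respond properly unless the whole page is requested".
    """
    if check_type == "status_code" and not needs_full_page:
        # Status-code detection only needs the response status, not the body.
        return "head"
    # Content-based detection (or a site that insists on GET) needs the body.
    return "get"


print(choose_request_method("status_code", needs_full_page=False))  # head
print(choose_request_method("message", needs_full_page=False))      # get
```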

@@ -398,7 +504,8 @@ def make_site_result(

|

||||

# The final result of the request will be what is available.

|

||||

allow_redirects = True

|

||||

|

||||

future = request_method(

|

||||

future = checker.prepare(

|

||||

method=request_method,

|

||||

url=url_probe,

|

||||

headers=headers,

|

||||

allow_redirects=allow_redirects,

|

||||

@@ -407,6 +514,7 @@ def make_site_result(

|

||||

|

||||

# Store future request object in the results object

|

||||

results_site["future"] = future

|

||||

results_site["checker"] = checker

|

||||

|

||||

return results_site

|

||||

|

||||

@@ -419,7 +527,9 @@ async def check_site_for_username(

|

||||

if not future:

|

||||

return site.name, default_result

|

||||

|

||||

response = await get_response(request_future=future, logger=logger)

|

||||

checker = default_result["checker"]

|

||||

|

||||

response = await checker.check(future=future)

|

||||

|

||||

response_result = process_site_result(

|

||||

response, query_notify, logger, default_result, site

|

||||

@@ -430,9 +540,9 @@ async def check_site_for_username(

|

||||

return site.name, response_result

|

||||

|

||||

|

||||

async def debug_ip_request(session, logger):

|

||||

future = session.get(url="https://icanhazip.com")

|

||||

ip, status, check_error = await get_response(future, logger)

|

||||

async def debug_ip_request(checker, logger):

|

||||

future = checker.prepare(url="https://icanhazip.com")

|

||||

ip, status, check_error = await checker.check(future)

|

||||

if ip:

|

||||

logger.debug(f"My IP is: {ip.strip()}")

|

||||

else:

|

||||

@@ -456,6 +566,8 @@ async def maigret(

|

||||

logger,

|

||||

query_notify=None,

|

||||

proxy=None,

|

||||

tor_proxy=None,

|

||||

i2p_proxy=None,

|

||||

timeout=3,

|

||||

is_parsing_enabled=False,

|

||||

id_type="username",

|

||||

@@ -465,6 +577,7 @@ async def maigret(

|

||||

no_progressbar=False,

|

||||

cookies=None,

|

||||

retries=0,

|

||||

check_domains=False,

|

||||

) -> QueryResultWrapper:

|

||||

"""Main search func

|

||||

|

||||

@@ -508,23 +621,36 @@ async def maigret(

|

||||

|

||||

query_notify.start(username, id_type)

|

||||

|

||||

# make http client session

|

||||

connector = (

|

||||

ProxyConnector.from_url(proxy) if proxy else aiohttp.TCPConnector(ssl=False)

|

||||

)

|

||||

connector.verify_ssl = False

|

||||

|

||||

cookie_jar = None

|

||||

if cookies:

|

||||

logger.debug(f"Using cookies jar file {cookies}")

|

||||

cookie_jar = await import_aiohttp_cookies(cookies)

|

||||

cookie_jar = import_aiohttp_cookies(cookies)

|

||||

|

||||

session = aiohttp.ClientSession(

|

||||

connector=connector, trust_env=True, cookie_jar=cookie_jar

|

||||

clearweb_checker = SimpleAiohttpChecker(

|

||||

proxy=proxy, cookie_jar=cookie_jar, logger=logger

|

||||

)

|

||||

|

||||

# TODO

|

||||

tor_checker = CheckerMock()

|

||||

if tor_proxy:

|

||||

tor_checker = ProxiedAiohttpChecker( # type: ignore

|

||||

proxy=tor_proxy, cookie_jar=cookie_jar, logger=logger

|

||||

)

|

||||

|

||||

# TODO

|

||||

i2p_checker = CheckerMock()

|

||||

if i2p_proxy:

|

||||

i2p_checker = ProxiedAiohttpChecker( # type: ignore

|

||||

proxy=i2p_proxy, cookie_jar=cookie_jar, logger=logger

|

||||

)

|

||||

|

||||

# TODO

|

||||

dns_checker = CheckerMock()

|

||||

if check_domains:

|

||||

dns_checker = AiodnsDomainResolver(logger=logger) # type: ignore

|

||||

|

||||

if logger.level == logging.DEBUG:

|

||||

await debug_ip_request(session, logger)

|

||||

await debug_ip_request(clearweb_checker, logger)

|

||||

|

||||

# setup parallel executor

|

||||

executor: Optional[AsyncExecutor] = None

|

||||

@@ -538,7 +664,12 @@ async def maigret(
# make options objects for all the requests
options: QueryOptions = {}
options["cookies"] = cookie_jar
options["session"] = session
options["checkers"] = {
'': clearweb_checker,
'tor': tor_checker,
'dns': dns_checker,
'i2p': i2p_checker,
}
options["parsing"] = is_parsing_enabled
options["timeout"] = timeout
options["id_type"] = id_type
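The `options["checkers"]` mapping keys a checker by network ('' for the clear web, 'tor', 'i2p', 'dns'), with `CheckerMock` filling any slot whose gateway is not configured. The sketch below illustrates that null-object pattern under stated assumptions: `NullChecker`, `EchoChecker`, `build_checkers`, `check_site` and the `check()` return shape are hypothetical, and no real request is made.

```python
import asyncio
from typing import Dict, Optional, Tuple

Result = Tuple[Optional[str], str]


class NullChecker:
    """Placeholder for a network whose gateway is not configured (hypothetical)."""

    async def check(self, url: str) -> Result:
        # No request is made; the caller gets an explicit error instead
        return None, "checker not configured"

    async def close(self) -> None:
        pass  # nothing to release


class EchoChecker:
    """Hypothetical checker that pretends every request succeeds."""

    def __init__(self, name: str) -> None:
        self.name = name

    async def check(self, url: str) -> Result:
        return f"{self.name}:{url}", "ok"

    async def close(self) -> None:
        pass


def build_checkers(tor_proxy: Optional[str] = None, i2p_proxy: Optional[str] = None) -> Dict[str, object]:
    # Same keys as options["checkers"]; unconfigured networks get the null checker
    return {
        "": EchoChecker("clearweb"),
        "tor": EchoChecker("tor") if tor_proxy else NullChecker(),
        "i2p": EchoChecker("i2p") if i2p_proxy else NullChecker(),
    }


async def check_site(checkers: Dict[str, object], protocol: str, url: str) -> Result:
    # In this sketch an unknown protocol falls back to the clear-web checker
    checker = checkers.get(protocol, checkers[""])
    return await checker.check(url)


async def demo():
    checkers = build_checkers(tor_proxy="socks5://127.0.0.1:9050")
    results = [
        await check_site(checkers, "", "https://example.com/u/john"),
        await check_site(checkers, "tor", "http://example.onion/u/john"),
        await check_site(checkers, "i2p", "http://example.i2p/u/john"),
    ]
    # The null checker's close() is a no-op, so every entry can be closed
    for checker in checkers.values():
        await checker.close()
    return results


if __name__ == "__main__":
    print(asyncio.run(demo()))
```

Because the null checker's `close()` does nothing, cleanup can close every entry unconditionally; the diff instead guards the Tor and I2P `close()` calls with `if tor_proxy:` and `if i2p_proxy:`.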

@@ -591,7 +722,11 @@ async def maigret(
)

# closing http client session
await session.close()
await clearweb_checker.close()
if tor_proxy:
await tor_checker.close()
if i2p_proxy:
await i2p_checker.close()

# notify caller that all queries are finished
query_notify.finish()

@@ -625,7 +760,13 @@ def timeout_check(value):


async def site_self_check(
site: MaigretSite, logger, semaphore, db: MaigretDatabase, silent=False
site: MaigretSite,
logger,
semaphore,
db: MaigretDatabase,
silent=False,
tor_proxy=None,
i2p_proxy=None,
):
changes = {
"disabled": False,

@@ -649,6 +790,8 @@ async def site_self_check(
forced=True,
no_progressbar=True,
retries=1,
tor_proxy=tor_proxy,
i2p_proxy=i2p_proxy,
)

# don't disable entries with other ids types

@@ -658,6 +801,8 @@ async def site_self_check(
changes["disabled"] = True
continue

logger.debug(results_dict)

result = results_dict[site.name]["status"]

site_status = result.status

@@ -696,7 +841,13 @@ async def site_self_check(


async def self_check(
db: MaigretDatabase, site_data: dict, logger, silent=False, max_connections=10
db: MaigretDatabase,
site_data: dict,
logger,
silent=False,
max_connections=10,
tor_proxy=None,
i2p_proxy=None,
) -> bool:
sem = asyncio.Semaphore(max_connections)
tasks = []

@@ -708,7 +859,9 @@ async def self_check(
disabled_old_count = disabled_count(all_sites.values())

for _, site in all_sites.items():
check_coro = site_self_check(site, logger, sem, db, silent)
check_coro = site_self_check(
site, logger, sem, db, silent, tor_proxy, i2p_proxy
)
future = asyncio.ensure_future(check_coro)
tasks.append(future)
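`self_check` now passes `tor_proxy` and `i2p_proxy` through to `site_self_check`, while concurrency stays bounded by `asyncio.Semaphore(max_connections)`. A minimal sketch of that bounded fan-out, assuming each per-site check acquires the semaphore with `async with`; the hypothetical `check_one` replaces `site_self_check` and sleeps instead of making requests:

```python
import asyncio
from typing import Dict, List


async def check_one(name: str, sem: asyncio.Semaphore, counters: Dict[str, int]) -> str:
    # Hypothetical stand-in for site_self_check: only the semaphore handling matters here
    async with sem:
        counters["active"] += 1
        counters["peak"] = max(counters["peak"], counters["active"])
        await asyncio.sleep(0.01)  # placeholder for the real network check
        counters["active"] -= 1
    return f"{name}: ok"


async def self_check_sketch(sites: List[str], max_connections: int = 10) -> Dict[str, object]:
    sem = asyncio.Semaphore(max_connections)
    counters = {"active": 0, "peak": 0}
    tasks = []
    for site in sites:
        # Schedule every check at once; the semaphore caps how many run concurrently
        tasks.append(asyncio.ensure_future(check_one(site, sem, counters)))
    results = await asyncio.gather(*tasks)
    return {"results": results, "peak": counters["peak"]}
```

`asyncio.gather` returns results in the order the tasks were passed, so the output lines up with the input site list regardless of completion order.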

+74

-24

@@ -1,7 +1,6 @@
"""
Maigret main module
"""
import aiohttp
import asyncio
import logging
import os

@@ -10,8 +9,7 @@ import platform
from argparse import ArgumentParser, RawDescriptionHelpFormatter
from typing import List, Tuple

import requests
from socid_extractor import extract, parse, __version__ as socid_version
from socid_extractor import extract, parse

from .__version__ import __version__
from .checking import (

@@ -33,11 +31,14 @@ from .report import (
SUPPORTED_JSON_REPORT_FORMATS,
save_json_report,
get_plaintext_report,
sort_report_by_data_points,
save_graph_report,
)
from .sites import MaigretDatabase
from .submit import submit_dialog
from .submit import Submitter
from .types import QueryResultWrapper
from .utils import get_dict_ascii_tree
from .settings import Settings


def notify_about_errors(search_results: QueryResultWrapper, query_notify):

@@ -60,17 +61,6 @@ def notify_about_errors(search_results: QueryResultWrapper, query_notify):
)


def extract_ids_from_url(url: str, db: MaigretDatabase) -> dict:
results = {}
for s in db.sites:
result = s.extract_id_from_url(url)
if not result:
continue
_id, _type = result
results[_id] = _type
return results


def extract_ids_from_page(url, logger, timeout=5) -> dict:
results = {}
# url, headers
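The removed module-level `extract_ids_from_url` loops over every site and keeps each `(id, type)` pair that a site's URL pattern yields; the new code delegates to `db.extract_ids_from_url(url)`. The sketch below reproduces that loop with a hypothetical `SiteSketch` class whose regex is derived from a `{username}` URL template. Maigret's own site matching is more involved, so treat the pattern handling here as an assumption.

```python
import re
from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple


@dataclass
class SiteSketch:
    """Hypothetical site entry built from a URL template containing {username}."""
    name: str
    url: str
    id_type: str = "username"

    def extract_id_from_url(self, url: str) -> Optional[Tuple[str, str]]:
        # Compare without the scheme so http and https links both match
        template = self.url.split("://", 1)[-1]
        candidate = url.split("://", 1)[-1].rstrip("/")
        # Escape the template, then turn the placeholder into a capture group
        pattern = re.escape(template).replace(re.escape("{username}"), r"([^/?#]+)")
        match = re.fullmatch(pattern, candidate)
        if not match:
            return None
        return match.group(1), self.id_type


def extract_ids_from_url(url: str, sites: List[SiteSketch]) -> Dict[str, str]:
    # Same loop shape as the removed function: keep every (id, type) a site yields
    results: Dict[str, str] = {}
    for site in sites:
        result = site.extract_id_from_url(url)
        if not result:
            continue
        _id, _type = result
        results[_id] = _type
    return results
```

As in the original, a later site that extracts the same id overwrites the earlier type, since the id is the dictionary key.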

@@ -116,18 +106,22 @@ def extract_ids_from_results(results: QueryResultWrapper, db: MaigretDatabase) -
ids_results[u] = utype

for url in dictionary.get('ids_links', []):
ids_results.update(extract_ids_from_url(url, db))
ids_results.update(db.extract_ids_from_url(url))

return ids_results


def setup_arguments_parser():
from aiohttp import __version__ as aiohttp_version
from requests import __version__ as requests_version
from socid_extractor import __version__ as socid_version

version_string = '\n'.join(
[
f'%(prog)s {__version__}',
f'Socid-extractor: {socid_version}',
f'Aiohttp: {aiohttp.__version__}',
f'Requests: {requests.__version__}',
f'Aiohttp: {aiohttp_version}',
f'Requests: {requests_version}',
f'Python: {platform.python_version()}',
]
)

@@ -203,7 +197,7 @@ def setup_arguments_parser():
metavar="DB_FILE",
dest="db_file",
default=None,
help="Load Maigret database from a JSON file or an online, valid, JSON file.",
help="Load Maigret database from a JSON file or HTTP web resource.",
)
parser.add_argument(
"--cookies-jar-file",

@@ -238,6 +232,26 @@ def setup_arguments_parser():
default=None,
help="Make requests over a proxy. e.g. socks5://127.0.0.1:1080",
)
parser.add_argument(
"--tor-proxy",
metavar='TOR_PROXY_URL',
action="store",
default='socks5://127.0.0.1:9050',
help="Specify URL of your Tor gateway. Default is socks5://127.0.0.1:9050",
)
parser.add_argument(
"--i2p-proxy",
metavar='I2P_PROXY_URL',
action="store",
default='http://127.0.0.1:4444',
help="Specify URL of your I2P gateway. Default is http://127.0.0.1:4444",
)
parser.add_argument(
"--with-domains",
action="store_true",
default=False,
help="Enable (experimental) feature of checking domains on usernames.",
)

filter_group = parser.add_argument_group(
'Site filtering', 'Options to set site search scope'
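The three new command-line options can be exercised in isolation. This sketch copies only their `add_argument` calls (help strings omitted) into a throwaway parser named `maigret-sketch`, so it does not reflect the rest of Maigret's CLI:

```python
from argparse import ArgumentParser


def build_parser() -> ArgumentParser:
    # Only the options added in this diff; help strings omitted for brevity
    parser = ArgumentParser(prog="maigret-sketch")
    parser.add_argument(
        "--tor-proxy",
        metavar="TOR_PROXY_URL",
        action="store",
        default="socks5://127.0.0.1:9050",
    )
    parser.add_argument(
        "--i2p-proxy",
        metavar="I2P_PROXY_URL",
        action="store",
        default="http://127.0.0.1:4444",
    )
    parser.add_argument("--with-domains", action="store_true", default=False)
    return parser


defaults = build_parser().parse_args([])
custom = build_parser().parse_args(["--tor-proxy", "socks5://10.0.0.2:9150", "--with-domains"])
```

As the diff reads, both proxy options have non-empty defaults, so `args.tor_proxy` and `args.i2p_proxy` are always truthy when `main()` forwards them, and the `if tor_proxy:` / `if i2p_proxy:` branches in `maigret()` appear to build proxied checkers even when no Tor or I2P gateway is running.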

@@ -409,6 +423,14 @@ def setup_arguments_parser():
default=False,
help="Generate a PDF report (general report on all usernames).",
)
report_group.add_argument(
"-G",
"--graph",
action="store_true",
dest="graph",
default=False,
help="Generate a graph report (general report on all usernames).",
)
report_group.add_argument(
"-J",
"--json",

@@ -420,6 +442,13 @@ def setup_arguments_parser():
help=f"Generate a JSON report of specific type: {', '.join(SUPPORTED_JSON_REPORT_FORMATS)}"
" (one report per username).",
)

parser.add_argument(
"--reports-sorting",
default='default',
choices=('default', 'data'),
help="Method of results sorting in reports (default: in order of getting the result)",
)
return parser

@@ -468,6 +497,12 @@ async def main():
if args.tags:
args.tags = list(set(str(args.tags).split(',')))

settings = Settings(
os.path.join(
os.path.dirname(os.path.realpath(__file__)), "resources/settings.json"
)
)

if args.db_file is None:
args.db_file = os.path.join(
os.path.dirname(os.path.realpath(__file__)), "resources/data.json"

@@ -486,7 +521,7 @@ async def main():
)

# Create object with all information about sites we are aware of.
db = MaigretDatabase().load_from_file(args.db_file)
db = MaigretDatabase().load_from_path(args.db_file)
get_top_sites_for_id = lambda x: db.ranked_sites_dict(
top=args.top_sites,
tags=args.tags,

@@ -498,9 +533,8 @@ async def main():
site_data = get_top_sites_for_id(args.id_type)

if args.new_site_to_submit:
is_submitted = await submit_dialog(
db, args.new_site_to_submit, args.cookie_file, logger
)
submitter = Submitter(db=db, logger=logger, settings=settings)
is_submitted = await submitter.dialog(args.new_site_to_submit, args.cookie_file)
if is_submitted:
db.save_to_file(args.db_file)

@@ -508,7 +542,12 @@ async def main():
if args.self_check:
print('Maigret sites database self-checking...')
is_need_update = await self_check(
db, site_data, logger, max_connections=args.connections
db,
site_data,
logger,
max_connections=args.connections,
tor_proxy=args.tor_proxy,
i2p_proxy=args.i2p_proxy,
)
if is_need_update:
if input('Do you want to save changes permanently? [Yn]\n').lower() in (

@@ -584,6 +623,8 @@ async def main():
site_dict=dict(sites_to_check),
query_notify=query_notify,
proxy=args.proxy,
tor_proxy=args.tor_proxy,
i2p_proxy=args.i2p_proxy,
timeout=args.timeout,
is_parsing_enabled=parsing_enabled,
id_type=id_type,

@@ -594,10 +635,14 @@ async def main():
max_connections=args.connections,
no_progressbar=args.no_progressbar,
retries=args.retries,
check_domains=args.with_domains,
)

notify_about_errors(results, query_notify)

if args.reports_sorting == "data":
results = sort_report_by_data_points(results)

general_results.append((username, id_type, results))

# TODO: tests

@@ -648,6 +693,11 @@ async def main():
save_pdf_report(filename, report_context)
query_notify.warning(f'PDF report on all usernames saved in {filename}')

if args.graph:
filename = report_filepath_tpl.format(username=username, postfix='.html')
save_graph_report(filename, general_results, db)
query_notify.warning(f'Graph report on all usernames saved in {filename}')

text_report = get_plaintext_report(report_context)
if text_report:
query_notify.info('Short text report:')

+170

-11

@@ -1,3 +1,4 @@
import ast
import csv
import io
import json

@@ -6,13 +7,13 @@ import os
from datetime import datetime
from typing import Dict, Any

import pycountry
import xmind
from dateutil.parser import parse as parse_datetime_str
from jinja2 import Template
from xhtml2pdf import pisa

from .checking import SUPPORTED_IDS
from .result import QueryStatus
from .sites import MaigretDatabase
from .utils import is_country_tag, CaseConverter, enrich_link_str

SUPPORTED_JSON_REPORT_FORMATS = [

@@ -36,6 +37,18 @@ def filter_supposed_data(data):
return filtered_supposed_data


def sort_report_by_data_points(results):
return dict(
sorted(
results.items(),
key=lambda x: len(
(x[1].get('status') and x[1]['status'].ids_data or {}).keys()
),
reverse=True,
)
)


"""
REPORTS SAVING
"""
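`sort_report_by_data_points`, used when `--reports-sorting data` is given, orders sites by how many `ids_data` fields were extracted, treating a missing status or an empty `ids_data` as zero. The function below is copied from the diff; the `SimpleNamespace` objects are hypothetical stand-ins for Maigret's result objects and assume only an `ids_data` attribute is read.

```python
from types import SimpleNamespace


def sort_report_by_data_points(results):
    # Copied from the diff: a missing status or empty ids_data counts as zero fields
    return dict(
        sorted(
            results.items(),
            key=lambda x: len(
                (x[1].get('status') and x[1]['status'].ids_data or {}).keys()
            ),
            reverse=True,
        )
    )


# Hypothetical results: SimpleNamespace stands in for the real status objects
sample = {
    "SiteA": {"status": SimpleNamespace(ids_data={"uid": "1"})},
    "SiteB": {"status": SimpleNamespace(ids_data={"uid": "2", "fullname": "John", "bio": "x"})},
    "SiteC": {},
    "SiteD": {"status": SimpleNamespace(ids_data=None)},
}
ordered = list(sort_report_by_data_points(sample))
```

Python's `sorted` stays stable with `reverse=True`, so sites with equal counts keep their original order (here `SiteC` before `SiteD`), which matches the "in order of getting the result" default for ties.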

@@ -61,6 +74,10 @@ def save_html_report(filename: str, context: dict):
def save_pdf_report(filename: str, context: dict):
template, css = generate_report_template(is_pdf=True)
filled_template = template.render(**context)

# moved here to speed up the launch of Maigret
from xhtml2pdf import pisa

with open(filename, "w+b") as f:
pisa.pisaDocument(io.StringIO(filled_template), dest=f, default_css=css)
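Several imports in this diff move into the functions that use them, each marked "moved here to speed up the launch of Maigret", so their load cost is paid only when that report is requested. A minimal sketch of the pattern, with the standard-library `csv` module standing in for a heavy optional dependency such as `xhtml2pdf`, and `save_report` as a hypothetical function:

```python
import os
import sys
import tempfile


def save_report(filename: str, context: dict) -> None:
    # Deferred import, as in save_pdf_report: the module is loaded on the first
    # call instead of at start-up. `csv` stands in for a heavy dependency.
    import csv

    with open(filename, "w", newline="", encoding="utf-8") as f:
        writer = csv.writer(f)
        for key, value in context.items():
            writer.writerow([key, value])


path = os.path.join(tempfile.mkdtemp(), "report.csv")
save_report(path, {"username": "john", "accounts": 3})
loaded = "csv" in sys.modules  # True once save_report has run
```

Python caches imported modules in `sys.modules`, so later calls only re-bind the name. Running `python -X importtime -c "import maigret"` is one way to see which imports dominate start-up time.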

@@ -70,6 +87,131 @@ def save_json_report(filename: str, username: str, results: dict, report_type: s
generate_json_report(username, results, f, report_type=report_type)


class MaigretGraph:
other_params = {'size': 10, 'group': 3}
site_params = {'size': 15, 'group': 2}
username_params = {'size': 20, 'group': 1}

def __init__(self, graph):
self.G = graph

def add_node(self, key, value):
node_name = f'{key}: {value}'

params = self.other_params
if key in SUPPORTED_IDS:
params = self.username_params
elif value.startswith('http'):
params = self.site_params

self.G.add_node(node_name, title=node_name, **params)

if value != value.lower():
normalized_node_name = self.add_node(key, value.lower())
self.link(node_name, normalized_node_name)

return node_name

def link(self, node1_name, node2_name):
self.G.add_edge(node1_name, node2_name, weight=2)


def save_graph_report(filename: str, username_results: list, db: MaigretDatabase):
# moved here to speed up the launch of Maigret
import networkx as nx

G = nx.Graph()
graph = MaigretGraph(G)

for username, id_type, results in username_results:
username_node_name = graph.add_node(id_type, username)

for website_name in results:
dictionary = results[website_name]
# TODO: fix no site data issue
if not dictionary:
continue

if dictionary.get("is_similar"):
continue

status = dictionary.get("status")
if not status: # FIXME: currently in case of timeout
continue

if dictionary["status"].status != QueryStatus.CLAIMED:
continue

site_fallback_name = dictionary.get(
'url_user', f'{website_name}: {username.lower()}'
)
# site_node_name = dictionary.get('url_user', f'{website_name}: {username.lower()}')
site_node_name = graph.add_node('site', site_fallback_name)
graph.link(username_node_name, site_node_name)

def process_ids(parent_node, ids):
for k, v in ids.items():
if k.endswith('_count') or k.startswith('is_') or k.endswith('_at'):
continue
if k in 'image':
continue

v_data = v
if v.startswith('['):
try:
v_data = ast.literal_eval(v)
except Exception as e:
logging.error(e)

# value is a list
if isinstance(v_data, list):
list_node_name = graph.add_node(k, site_fallback_name)
for vv in v_data:
data_node_name = graph.add_node(vv, site_fallback_name)
graph.link(list_node_name, data_node_name)

add_ids = {
a: b for b, a in db.extract_ids_from_url(vv).items()
}
if add_ids:
process_ids(data_node_name, add_ids)
else:
# value is just a string
# ids_data_name = f'{k}: {v}'
# if ids_data_name == parent_node:
# continue

ids_data_name = graph.add_node(k, v)
# G.add_node(ids_data_name, size=10, title=ids_data_name, group=3)
graph.link(parent_node, ids_data_name)

# check for username
if 'username' in k or k in SUPPORTED_IDS:
new_username_node_name = graph.add_node('username', v)
graph.link(ids_data_name, new_username_node_name)

add_ids = {k: v for v, k in db.extract_ids_from_url(v).items()}
if add_ids:
process_ids(ids_data_name, add_ids)

if status.ids_data:
process_ids(site_node_name, status.ids_data)

nodes_to_remove = []
for node in G.nodes:
if len(str(node)) > 100:
nodes_to_remove.append(node)

[G.remove_node(node) for node in nodes_to_remove]

# moved here to speed up the launch of Maigret
from pyvis.network import Network

nt = Network(notebook=True, height="750px", width="100%")
nt.from_nx(G)
nt.show(filename)


def get_plaintext_report(context: dict) -> str:
output = (context['brief'] + " ").replace('. ', '.\n')
interests = list(map(lambda x: x[0], context.get('interests_tuple_list', [])))
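`MaigretGraph.add_node` sizes and groups each node by kind (supported id, URL, other) and links any mixed-case value to a lowercase twin, so that `John` and `john` found on different sites join up. The sketch reproduces that logic against `TinyGraph`, a hypothetical stand-in exposing only the `add_node`/`add_edge` calls used here (the real code uses `networkx.Graph`), with a hypothetical two-item `SUPPORTED_IDS`. It also shows the `ast.literal_eval` step that `process_ids` applies to list-like string values; catching only `ValueError` and `SyntaxError` narrows the diff's `except Exception`.

```python
import ast
from typing import Dict, Set, Tuple

# Hypothetical subset; the real SUPPORTED_IDS lives in maigret.checking
SUPPORTED_IDS = ("username", "yandex_public_id")


class TinyGraph:
    """Stand-in for networkx.Graph exposing only the two calls used below."""

    def __init__(self) -> None:
        self.nodes: Dict[str, dict] = {}
        self.edges: Set[Tuple[str, ...]] = set()

    def add_node(self, name: str, **attrs) -> None:
        self.nodes.setdefault(name, {}).update(attrs)

    def add_edge(self, a: str, b: str, **attrs) -> None:
        # Undirected: store each edge with its endpoints in sorted order
        self.edges.add(tuple(sorted((a, b))))


class GraphSketch:
    other_params = {'size': 10, 'group': 3}
    site_params = {'size': 15, 'group': 2}
    username_params = {'size': 20, 'group': 1}

    def __init__(self, graph) -> None:
        self.G = graph

    def add_node(self, key: str, value: str) -> str:
        node_name = f'{key}: {value}'
        params = self.other_params
        if key in SUPPORTED_IDS:
            params = self.username_params
        elif value.startswith('http'):
            params = self.site_params
        self.G.add_node(node_name, title=node_name, **params)
        # A mixed-case value also gets a lowercase twin linked to it
        if value != value.lower():
            normalized_node_name = self.add_node(key, value.lower())
            self.link(node_name, normalized_node_name)
        return node_name

    def link(self, node1_name: str, node2_name: str) -> None:
        self.G.add_edge(node1_name, node2_name, weight=2)


def parse_list_value(v: str):
    # List-like strings such as "['a', 'b']" are parsed without eval()
    if v.startswith('['):
        try:
            return ast.literal_eval(v)
        except (ValueError, SyntaxError):
            return v
    return v
```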

@@ -118,6 +260,9 @@ def generate_report_context(username_results: list):

first_seen = None

# moved here to speed up the launch of Maigret
import pycountry

for username, id_type, results in username_results:
found_accounts = 0
new_ids = []

@@ -243,14 +388,18 @@ def generate_csv_report(username: str, results: dict, csvfile):
["username", "name", "url_main", "url_user", "exists", "http_status"]
)
for site in results:
# TODO: fix the reason
status = 'Unknown'
if "status" in results[site]:
status = str(results[site]["status"].status)
writer.writerow(
[
username,
site,
results[site]["url_main"],
results[site]["url_user"],
str(results[site]["status"].status),
results[site]["http_status"],
results[site].get("url_main", ""),
results[site].get("url_user", ""),
status,
results[site].get("http_status", 0),
]
)
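`generate_csv_report` now tolerates entries that lack a status or URL fields (the `# TODO: fix the reason` comment suggests these come from failed checks). A self-contained sketch of the hardened loop, using a plain string in place of `QueryStatus` and a `SimpleNamespace` as the status object (both assumptions):

```python
import csv
import io
from types import SimpleNamespace


def generate_csv_report(username: str, results: dict, csvfile) -> None:
    writer = csv.writer(csvfile)
    writer.writerow(["username", "name", "url_main", "url_user", "exists", "http_status"])
    for site, data in results.items():
        # Entries that never received a status are reported as 'Unknown'
        status = 'Unknown'
        if "status" in data:
            status = str(data["status"].status)
        writer.writerow(
            [
                username,
                site,
                data.get("url_main", ""),
                data.get("url_user", ""),
                status,
                data.get("http_status", 0),
            ]
        )


# Hypothetical input: a plain string replaces QueryStatus.CLAIMED
buf = io.StringIO()
generate_csv_report(
    "john",
    {
        "GitHub": {
            "status": SimpleNamespace(status="Claimed"),
            "url_main": "https://github.com",
            "url_user": "https://github.com/john",
            "http_status": 200,
        },
        "Broken": {},
    },
    buf,
)
rows = list(csv.reader(io.StringIO(buf.getvalue())))
```

Like the diff, this still assumes that a present `status` key holds an object with a `.status` attribute; a `None` value would raise.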

@@ -262,7 +411,10 @@ def generate_txt_report(username: str, results: dict, file):
# TODO: fix no site data issue
if not dictionary:
continue
if dictionary.get("status").status == QueryStatus.CLAIMED:
if (
dictionary.get("status")
and dictionary["status"].status == QueryStatus.CLAIMED
):
exists_counter += 1
file.write(dictionary["url_user"] + "\n")
file.write(f"Total Websites Username Detected On : {exists_counter}")

@@ -275,14 +427,18 @@ def generate_json_report(username: str, results: dict, file, report_type):
for sitename in results:
site_result = results[sitename]
# TODO: fix no site data issue
if not site_result or site_result.get("status").status != QueryStatus.CLAIMED:
if not site_result or not site_result.get("status"):
continue

if site_result["status"].status != QueryStatus.CLAIMED:
continue

data = dict(site_result)
data["status"] = data["status"].json()
data["site"] = data["site"].json
if "future" in data:
del data["future"]
for field in ["future", "checker"]:
if field in data:
del data[field]

if is_report_per_line:
data["sitename"] = sitename
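`generate_json_report` now skips entries without a status and strips both `future` and `checker` before serialising, since neither runtime object can be encoded by `json`. The sketch keeps only that filtering step; `claimed_entries`, the `CLAIMED` string and the flattened `{"status": ...}` form are hypothetical simplifications of the real `QueryStatus` and `.json()` calls, and the `site` field is left out.

```python
import json
from types import SimpleNamespace

CLAIMED = "Claimed"  # hypothetical stand-in for QueryStatus.CLAIMED


def claimed_entries(results: dict) -> dict:
    out = {}
    for sitename, site_result in results.items():
        # Skip empty entries and entries without a status (e.g. timeouts)
        if not site_result or not site_result.get("status"):
            continue
        if site_result["status"].status != CLAIMED:
            continue
        # Shallow copy so the caller's dict keeps its runtime objects
        data = dict(site_result)
        data["status"] = {"status": data["status"].status}
        # Drop runtime-only objects that json.dumps cannot encode
        for field in ["future", "checker"]:
            if field in data:
                del data[field]
        out[sitename] = data
    return out


results = {
    "SiteA": {
        "status": SimpleNamespace(status="Claimed"),
        "url_user": "https://a.example/john",
        "future": object(),
        "checker": object(),
    },
    "SiteB": {"status": SimpleNamespace(status="Available")},
    "SiteC": {},
    "SiteD": {"status": None},
}
report = json.dumps(claimed_entries(results))
```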

@@ -331,8 +487,11 @@ def design_xmind_sheet(sheet, username, results):

for website_name in results:
dictionary = results[website_name]
if not dictionary:
continue
result_status = dictionary.get("status")
if result_status.status != QueryStatus.CLAIMED:
# TODO: fix the reason
if not result_status or result_status.status != QueryStatus.CLAIMED:
continue

stripped_tags = list(map(lambda x: x.strip(), result_status.tags))

+774

-54

File diff suppressed because it is too large

@@ -0,0 +1,17 @@
{
"presence_strings": [
"username",
"not found",
"пользователь",
"profile",
"lastname",
"firstname",
"biography",
"birthday",
"репутация",
"информация",
"e-mail"
],
"supposed_usernames": [
"alex", "god", "admin", "red", "blue", "john"]
}

@@ -68,7 +68,7 @@
<div class="row-mb">
<div class="col-md">
<div class="card flex-md-row mb-4 box-shadow h-md-250">
<img class="card-img-right flex-auto d-md-block" alt="Photo" style="width: 200px; height: 200px; object-fit: scale-down;" src="{{ v.status.ids_data.image or 'https://i.imgur.com/040fmbw.png' }}" data-holder-rendered="true">
<img class="card-img-right flex-auto d-md-block" alt="Photo" style="width: 200px; height: 200px; object-fit: scale-down;" src="{{ v.status and v.status.ids_data and v.status.ids_data.image or 'https://i.imgur.com/040fmbw.png' }}" data-holder-rendered="true">
<div class="card-body d-flex flex-column align-items-start" style="padding-top: 0;">
<h3 class="mb-0" style="padding-top: 1rem;">
<a class="text-dark" href="{{ v.url_main }}" target="_blank">{{ k }}</a>

@@ -0,0 +1,29 @@
import json


class Settings:
presence_strings: list
supposed_usernames: list

def __init__(self, filename):
data = {}

try:
with open(filename, "r", encoding="utf-8") as file:
try:
data = json.load(file)
except Exception as error:
raise ValueError(
f"Problem with parsing json contents of "
f"settings file '{filename}': {str(error)}."
)
except FileNotFoundError as error:
raise FileNotFoundError(
f"Problem while attempting to access settings file '{filename}'."
) from error

self.__dict__.update(data)

@property
def json(self):
return self.__dict__
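The new `Settings` class copies every top-level key of `resources/settings.json` onto the instance, so `settings.presence_strings` and `settings.supposed_usernames` become plain attributes. The variant below (hypothetical name `SettingsSketch`) keeps the original error messages but narrows the inner `except Exception` to `json.JSONDecodeError` and chains it with `from error`; both changes are assumptions about which failures are worth rewrapping.

```python
import json


class SettingsSketch:
    # Annotations document the expected keys; values come from the JSON file
    presence_strings: list
    supposed_usernames: list

    def __init__(self, filename: str):
        try:
            with open(filename, "r", encoding="utf-8") as file:
                data = json.load(file)
        except FileNotFoundError as error:
            raise FileNotFoundError(
                f"Problem while attempting to access settings file '{filename}'."
            ) from error
        except json.JSONDecodeError as error:
            raise ValueError(
                f"Problem with parsing json contents of "
                f"settings file '{filename}': {error}."
            ) from error
        # Every top-level JSON key becomes an instance attribute
        self.__dict__.update(data)

    @property
    def json(self) -> dict:
        return self.__dict__
```

Because no schema check is performed, any extra key added to `settings.json` also surfaces as an attribute, and a missing key only fails later, at attribute access.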

+40

-66

@@ -9,64 +9,6 @@ import requests

from .utils import CaseConverter, URLMatcher, is_country_tag

# TODO: move to data.json
SUPPORTED_TAGS = [
"gaming",
"coding",
"photo",
"music",
"blog",
"finance",
"freelance",
"dating",
"tech",
"forum",
"porn",
"erotic",
"webcam",
"video",
"movies",
"hacking",
"art",
"discussion",
"sharing",
"writing",
"wiki",
"business",
"shopping",
"sport",
"books",
"news",
"documents",
"travel",
"maps",
"hobby",
"apps",
"classified",
"career",
"geosocial",
"streaming",
"education",
"networking",
"torrent",
"science",
"medicine",
"reading",
"stock",
"messaging",
"trading",
"links",
"fashion",
"tasks",
"military",
"auto",
"gambling",
"cybercriminal",